All Splunk SPLK-1003 certification exam dumps, study guides, and training courses are prepared by industry experts. PrepAway's ETE files provide the SPLK-1003 Splunk Enterprise Certified Admin practice test questions and answers, and the exam dumps, study guide, and training courses help you study and pass hassle-free!

Navigating the SPLK-1003 Exam: Splunk Enterprise Administration Made Simple

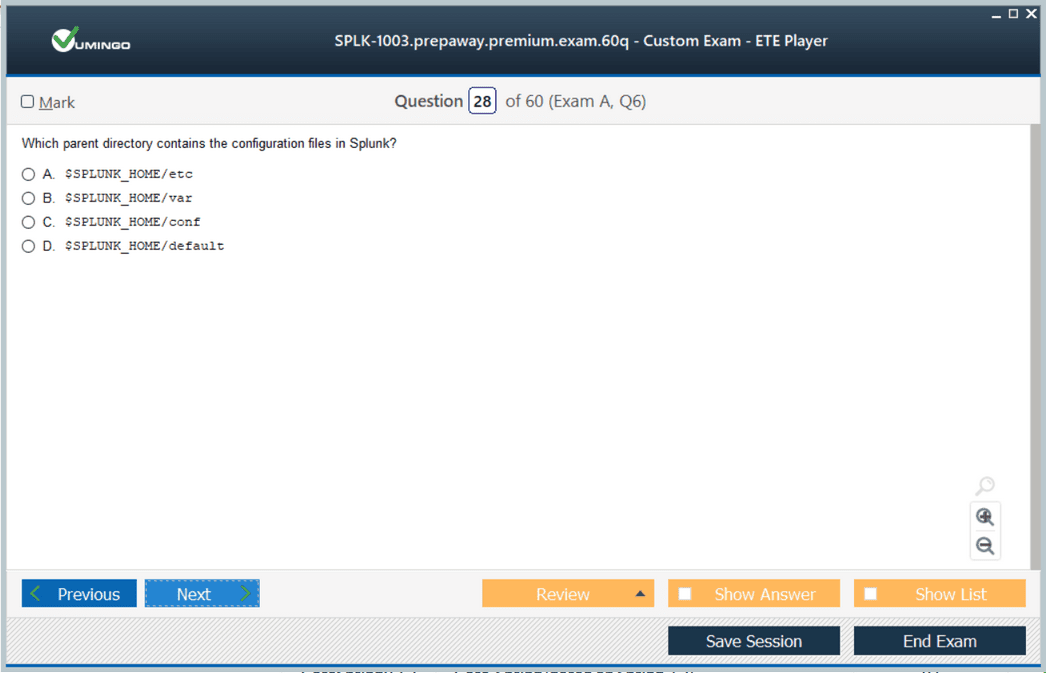

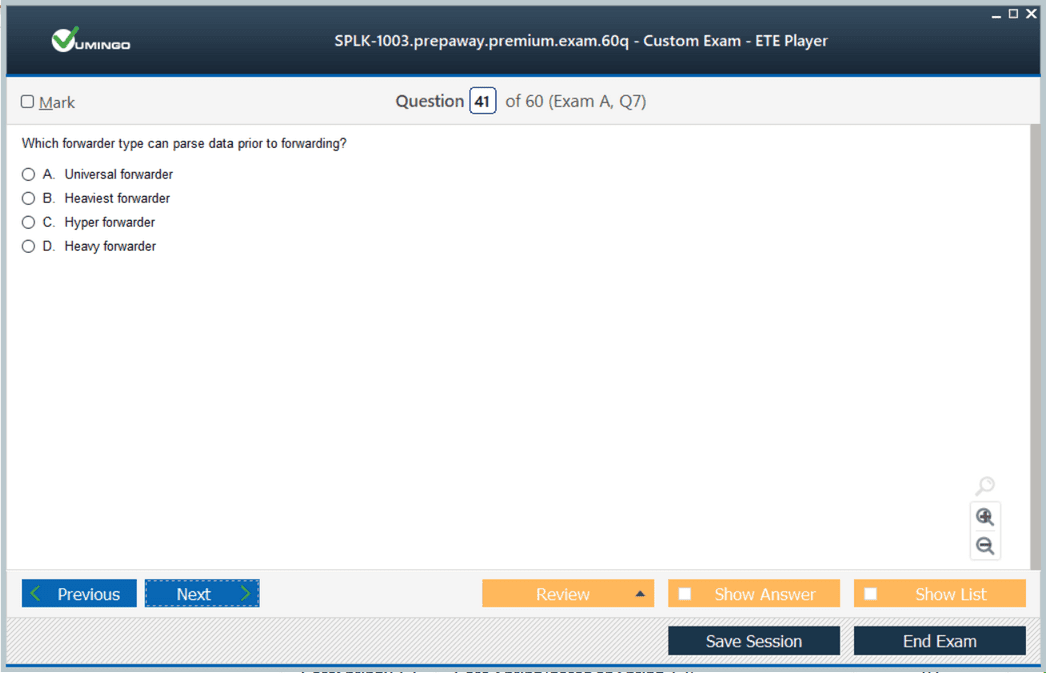

The SPLK-1003 Splunk Enterprise Certified Admin exam validates the practical skills needed to manage and maintain a Splunk environment efficiently. One of the foundational areas is the architecture of Splunk Enterprise. The platform is built on components including forwarders, indexers, and search heads, each serving a distinct role in collecting, processing, and querying data. Forwarders gather data from various sources and send it to indexers, which store and organize the data for efficient retrieval. Search heads provide a user interface for executing searches, creating dashboards, and generating reports. Candidates must understand how these components interact to ensure optimized data flow, high availability, and scalable deployment strategies.

Deployment Strategies and Environment Setup

A core focus of the SPLK-1003 exam is deploying Splunk in a way that maximizes performance and reliability. Administrators must understand the installation procedures for standalone and distributed deployments, including considerations for system resources, network configuration, and security settings. Proper deployment planning involves defining the roles of indexers, search heads, and forwarders to balance workload and ensure fault tolerance. Candidates are expected to set up deployment servers, configure server classes, and use forwarder management to streamline onboarding of new data sources. A thorough understanding of deployment strategies ensures that administrators can maintain a resilient environment capable of supporting large-scale data operations.
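The idea behind server classes can be sketched in a few lines of Python. This is only a conceptual model, not how a deployment server is implemented: the class names, host patterns, and app names are hypothetical, and shell-style wildcards from `fnmatch` stand in for the richer matching rules of `serverclass.conf`.

```python
from fnmatch import fnmatch

# Hypothetical server classes: each maps host patterns to the apps it deploys.
SERVER_CLASSES = {
    "linux_web": {"whitelist": ["web-*"], "apps": ["web_inputs"]},
    "all_forwarders": {"whitelist": ["*"], "apps": ["outputs_base"]},
}


def apps_for_host(hostname, server_classes=SERVER_CLASSES):
    """Return the sorted list of apps a forwarder with this hostname would receive."""
    apps = set()
    for spec in server_classes.values():
        # A host belongs to a server class if any whitelist pattern matches it.
        if any(fnmatch(hostname, pattern) for pattern in spec["whitelist"]):
            apps.update(spec["apps"])
    return sorted(apps)


print(apps_for_host("web-01"))  # ['outputs_base', 'web_inputs']
print(apps_for_host("db-01"))   # ['outputs_base']
```

Grouping forwarders by pattern rather than listing them one by one is what lets a new host pick up the right inputs as soon as it checks in.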

Data Onboarding and Index Management

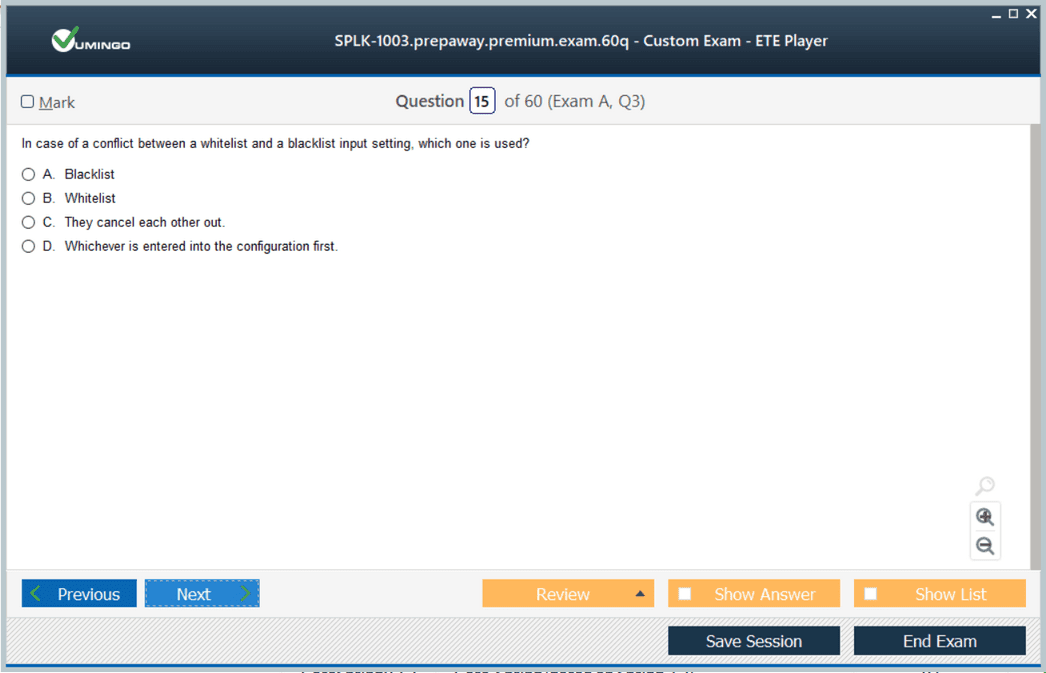

Managing data inputs and indexes is a critical competency for the SPLK-1003 exam. Candidates must understand how Splunk ingests raw data from diverse sources, including log files, metrics, APIs, and streaming data. Knowledge of sourcetypes, host definitions, and index allocation is crucial to maintain organized and retrievable datasets. Administrators should configure inputs properly to ensure data consistency and reduce parsing errors. Index management also involves creating and maintaining indexes, setting retention policies, and monitoring disk usage to prevent performance degradation. Effective data onboarding and index management support efficient searching and accurate reporting across all Splunk components.
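As a rough illustration, the Python sketch below renders a file-monitor input stanza and a matching index stanza as text. The log path, index name, sourcetype, and 90-day retention are placeholder assumptions; the setting names (`index`, `sourcetype`, `homePath`, `coldPath`, `thawedPath`, `frozenTimePeriodInSecs`) are the usual `inputs.conf` and `indexes.conf` keys.

```python
def render_stanza(name, settings):
    """Render one .conf stanza as text: a [name] header plus key = value lines."""
    lines = [f"[{name}]"]
    lines += [f"{key} = {value}" for key, value in settings.items()]
    return "\n".join(lines)


RETENTION_DAYS = 90  # assumed retention requirement, not a recommendation

# Hypothetical monitor input: path, index, and sourcetype are placeholders.
inputs_conf = render_stanza(
    "monitor:///var/log/app/app.log",
    {"index": "app_logs", "sourcetype": "app:log", "disabled": "false"},
)

# Matching index definition with age-based retention expressed in seconds.
indexes_conf = render_stanza(
    "app_logs",
    {
        "homePath": "$SPLUNK_DB/app_logs/db",
        "coldPath": "$SPLUNK_DB/app_logs/colddb",
        "thawedPath": "$SPLUNK_DB/app_logs/thaweddb",
        "frozenTimePeriodInSecs": RETENTION_DAYS * 86400,
    },
)

print(inputs_conf)
print()
print(indexes_conf)
```

The point of the example is the pairing: every input names an index and a sourcetype explicitly, and the index it names has a defined retention period.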

Search Processing and Query Optimization

Proficiency in searching data using SPL is a central requirement of the SPLK-1003 exam. Administrators must be able to construct searches that retrieve relevant data efficiently while minimizing system load. Understanding the order of command execution, filtering before transforming data, and using indexed fields are essential techniques for optimizing searches. Advanced search practices include using subsearches, summary indexing, and report acceleration to handle large datasets without impacting performance. Candidates must also be capable of troubleshooting slow or incomplete searches, ensuring that dashboards and reports provide timely and accurate insights.
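The "filter before transforming" advice can be made concrete with a small helper that assembles an SPL string. This is a sketch with assumed values: the function name `build_search` is hypothetical, and the index, sourcetype, and field values are examples only. What it demonstrates is the ordering, with index, sourcetype, time range, and field filters placed before the first pipe so that less data reaches the transforming command.

```python
def build_search(index, sourcetype, filters, earliest, transform):
    """Assemble an SPL string with all filtering terms ahead of the first pipe."""
    base = [f"index={index}", f"sourcetype={sourcetype}", f"earliest={earliest}"]
    base += [f"{field}={value}" for field, value in filters.items()]
    return " ".join(base) + " | " + transform


query = build_search(
    index="web",
    sourcetype="access_combined",
    filters={"status": 500},
    earliest="-24h",
    transform="stats count by host",
)
print(query)
# index=web sourcetype=access_combined earliest=-24h status=500 | stats count by host
```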

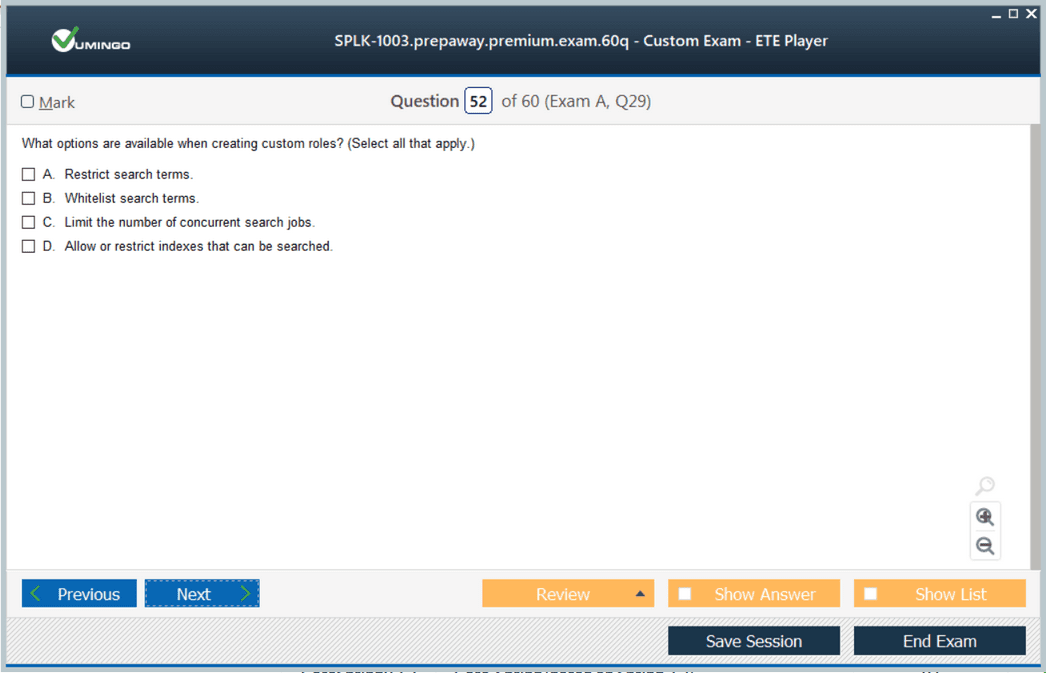

User and Role Administration

Controlling access to Splunk data and functions is a fundamental administrative responsibility. Candidates are expected to create and manage user accounts, define roles, and assign permissions based on organizational requirements. This includes implementing role-based access control to restrict or grant visibility to specific indexes, reports, and dashboards. Administrators should be familiar with authentication methods, including native Splunk authentication, LDAP integration, and single sign-on solutions. Proper user and role management ensures secure access to sensitive data while supporting collaborative workflows within the organization.
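A simplified model of role inheritance shows how index visibility accumulates across roles. The role names and index lists here are hypothetical; the keys mirror the `importRoles` and `srchIndexesAllowed` settings of `authorize.conf`. Wildcards and disallowed-index settings are deliberately left out, so treat this as a sketch of the concept rather than of Splunk's actual resolution logic.

```python
# Hypothetical roles; keys mirror authorize.conf setting names.
ROLES = {
    "user": {"importRoles": [], "srchIndexesAllowed": ["main"]},
    "app_analyst": {"importRoles": ["user"], "srchIndexesAllowed": ["app_logs"]},
}


def allowed_indexes(role, roles=ROLES, _seen=None):
    """Return the set of indexes a role can search, including inherited ones."""
    seen = set() if _seen is None else _seen
    if role in seen:  # guard against circular imports between roles
        return set()
    seen.add(role)
    spec = roles[role]
    result = set(spec["srchIndexesAllowed"])
    for parent in spec["importRoles"]:
        result |= allowed_indexes(parent, roles, seen)
    return result


print(sorted(allowed_indexes("app_analyst")))  # ['app_logs', 'main']
print(sorted(allowed_indexes("user")))         # ['main']
```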

Monitoring System Health

Maintaining the operational health of a Splunk environment is a critical skill for the SPLK-1003 exam. Administrators must use built-in monitoring tools to track system performance, resource utilization, and event processing rates. This includes monitoring indexing queues, search scheduler activity, and forwarder connectivity. Candidates should be able to interpret metrics and logs to detect anomalies or performance bottlenecks and take corrective action. Proactive monitoring allows administrators to prevent downtime, optimize resource allocation, and maintain the reliability of dashboards and alerts.
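Interpreting queue metrics usually comes down to comparing a queue's current size with its maximum. The sketch below parses one line written in the style of the queue entries in `metrics.log` and computes a fill ratio. The sample line and its values are made up for illustration, and the exact field layout is an assumption that should be checked against your own log files.

```python
import re

# Illustrative line in the style of metrics.log queue entries; values are made up.
SAMPLE = (
    "01-15-2025 10:00:00.000 +0000 INFO  Metrics - group=queue, name=indexqueue, "
    "max_size_kb=500, current_size_kb=450, current_size=1200, "
    "largest_size=1500, smallest_size=0"
)


def queue_fill_ratio(line):
    """Return (queue_name, fill_ratio) for a queue metrics line, else None."""
    fields = dict(re.findall(r"(\w+)=([^,\s]+)", line))
    if fields.get("group") != "queue":
        return None
    ratio = int(fields["current_size_kb"]) / int(fields["max_size_kb"])
    return fields["name"], ratio


print(queue_fill_ratio(SAMPLE))  # ('indexqueue', 0.9)
```

A ratio that stays close to 1.0 over time suggests the stage downstream of that queue is the bottleneck.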

Backup, Restore, and Maintenance Procedures

Regular maintenance is essential to ensure long-term stability and data integrity. Candidates must understand how to implement backup and restore strategies for configurations, indexes, and critical system files. Routine maintenance tasks include upgrading Splunk software, applying patches, and performing system health checks. Administrators must plan upgrades carefully to minimize downtime, validate system functionality post-upgrade, and ensure that data retention policies are upheld. Effective maintenance procedures reduce the risk of data loss, prevent system failures, and maintain consistent performance across all components.
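A configuration backup can be as simple as archiving the `etc` directory under the Splunk installation. The following is a minimal sketch, assuming the configuration lives in `<splunk_home>/etc`; the function name and archive naming scheme are hypothetical, and index data would need a separate strategy.

```python
import tarfile
import time
from pathlib import Path


def backup_config(splunk_home, dest_dir):
    """Archive <splunk_home>/etc into a timestamped .tar.gz and return its path."""
    etc_dir = Path(splunk_home) / "etc"
    if not etc_dir.is_dir():
        raise FileNotFoundError(f"no etc directory under {splunk_home}")
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    archive = dest / f"splunk-etc-{time.strftime('%Y%m%d-%H%M%S')}.tar.gz"
    with tarfile.open(archive, "w:gz") as tar:
        # Store entries under a top-level "etc/" so a restore is unambiguous.
        tar.add(etc_dir, arcname="etc")
    return archive
```

A call such as `backup_config("/opt/splunk", "/backups/splunk")` (both paths are examples) would be run before an upgrade, so that the previous configuration can be restored if post-upgrade validation fails.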

Troubleshooting and Issue Resolution

The SPLK-1003 exam emphasizes the ability to troubleshoot and resolve operational issues efficiently. Candidates should be able to identify common problems such as indexing errors, search delays, and permission conflicts. Using diagnostic tools, logs, and monitoring dashboards, administrators can investigate root causes and implement corrective actions. Best practices include documenting recurring issues, applying systematic approaches to problem-solving, and continuously improving processes to reduce future occurrences. Proficiency in troubleshooting ensures that Splunk environments remain stable, secure, and capable of supporting business-critical functions

Knowledge Object Management

Knowledge objects, including saved searches, macros, event types, and tags, are integral to efficient Splunk administration. Candidates must create reusable objects that standardize searches and reports, improve consistency, and simplify maintenance. Proper management involves organizing objects logically, documenting their purpose, and applying them consistently across dashboards and alerts. Mastery of knowledge object management allows administrators to reduce redundancy, streamline workflows, and provide users with reliable and actionable insights

Data Normalization and Event Enrichment

Data normalization and enrichment are essential for providing context and consistency across datasets. Candidates should be able to implement the Common Information Model to standardize field names and structures, facilitating correlation and analysis. Enrichment techniques, including lookups and calculated fields, add value to raw data and improve reporting accuracy. Understanding how to normalize and enrich events ensures that dashboards and alerts are meaningful, actionable, and aligned with organizational monitoring and reporting objectives.
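The two steps can be pictured with plain dictionaries: first rename vendor-specific fields to common names, then add context from a lookup table. The alias map and lookup contents below are hypothetical, and the code only mimics what field aliases and lookups accomplish at search time; it is not how Splunk implements them.

```python
# Hypothetical vendor-to-common field names and a small lookup table.
FIELD_ALIASES = {"src_ip": "src", "dst_ip": "dest", "username": "user"}
STATUS_LOOKUP = {"200": "OK", "404": "Not Found", "500": "Internal Server Error"}


def normalize(event):
    """Rename aliased fields, then enrich the event with a status description."""
    out = {FIELD_ALIASES.get(key, key): value for key, value in event.items()}
    status = out.get("status")
    if status in STATUS_LOOKUP:
        out["status_description"] = STATUS_LOOKUP[status]
    return out


raw = {"src_ip": "10.0.0.5", "dst_ip": "10.0.0.9", "status": "500"}
print(normalize(raw))
# {'src': '10.0.0.5', 'dest': '10.0.0.9', 'status': '500', 'status_description': 'Internal Server Error'}
```

Once every source uses the same field names, a single search or dashboard panel can cover all of them.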

Dashboard Design and Visualization

Creating dashboards that communicate insights effectively is a key aspect of the SPLK-1003 exam. Administrators must combine multiple visualizations such as charts, tables, and event panels to present data clearly. Interactive features such as drilldowns, dynamic filters, and input controls enable users to explore data in depth and respond to trends or anomalies. Effective dashboard design balances clarity, relevance, and performance, providing stakeholders with tools to make informed decisions and monitor operational performance continuously

Alerts and Workflow Automation

Alerts are critical for proactive monitoring of events and system performance. Candidates must configure conditions, thresholds, and schedules to trigger notifications or automated actions. Integration with workflow automation allows alerts to initiate scripts, update dashboards, or communicate with external systems. This capability reduces response times, ensures consistent handling of critical events, and enhances operational efficiency. Administrators must design alerting systems that are actionable, reliable, and aligned with organizational priorities.
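The decision an alert makes on each scheduled run can be reduced to a threshold check plus a suppression window. The function below is a conceptual sketch with hypothetical names and values, showing a "number of events greater than N" condition combined with throttling so that a persistent problem does not notify on every run.

```python
def should_fire(event_count, threshold, now, last_fired, throttle_secs):
    """Decide whether a scheduled alert run should trigger its actions.

    now and last_fired are timestamps in seconds; last_fired is None
    if the alert has never fired.
    """
    if event_count <= threshold:
        return False  # trigger condition not met
    if last_fired is not None and now - last_fired < throttle_secs:
        return False  # still inside the suppression window
    return True


# Threshold of 10 events with a 10-minute throttle (values are examples).
print(should_fire(25, 10, now=1000, last_fired=None, throttle_secs=600))  # True
print(should_fire(25, 10, now=1000, last_fired=700, throttle_secs=600))   # False
print(should_fire(5, 10, now=1000, last_fired=None, throttle_secs=600))   # False
```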

Search Acceleration and Performance Tuning

Optimizing system performance and search efficiency is essential in large Splunk deployments. Candidates should understand search acceleration techniques such as report acceleration and summary indexing to improve query speed. Index optimization, use of indexed fields, and efficient search design reduce system load and enhance responsiveness. Knowledge of performance tuning ensures that dashboards, alerts, and reports remain reliable under high data volumes, supporting timely decision-making and operational monitoring

Practical Scenario Application

The SPLK-1003 exam emphasizes the application of skills in realistic operational scenarios. Candidates are expected to perform administrative tasks, troubleshoot issues, and manage users and data inputs in environments that simulate real-world conditions. Hands-on practice enables administrators to understand workflows, anticipate challenges, and implement best practices effectively. Practical application ensures that candidates can translate theoretical knowledge into operational proficiency, maintaining a stable and efficient Splunk Enterprise environment

Continuous Skill Development

Achieving proficiency in SPLK-1003 competencies encourages continuous learning and skill refinement. Administrators are expected to keep exploring advanced SPL features, refine dashboards and workflows, and adapt strategies for evolving data challenges. Continuous practice improves troubleshooting efficiency, enhances search optimization, and supports better system monitoring. Ongoing skill development ensures that certified administrators can manage complex environments, support operational intelligence, and contribute to informed decision-making

Integration and Collaboration

Effective Splunk administration requires integration across system components and collaboration with other teams. Candidates must understand how to coordinate forwarders, indexers, and search heads while ensuring consistent user access and knowledge object usage. Collaboration involves sharing dashboards, reports, and alerts with stakeholders, providing insights that drive operational and strategic actions. Understanding integration and teamwork principles ensures administrators can maintain robust environments that meet organizational requirements efficiently

Ensuring Security and Compliance

Administrators must enforce security policies to protect data integrity and control access. This includes implementing role-based access, managing authentication methods, and monitoring for unauthorized activity. Compliance with organizational policies and regulatory standards is essential for safeguarding sensitive information. Proficiency in security practices ensures that data remains confidential, accurate, and accessible only to authorized users, supporting trust and reliability in Splunk operations

Leveraging SPLK-1003 Skills for Organizational Value

Certified administrators demonstrate the ability to transform raw data into actionable insights. By managing data inputs, optimizing searches, configuring alerts, and maintaining dashboards, administrators support operational intelligence, improve monitoring efficiency, and enhance decision-making processes. Mastery of SPLK-1003 competencies ensures that the platform delivers reliable insights and contributes to organizational performance

Preparing Effectively Through Hands-On Practice

Hands-on experience is crucial for mastering SPLK-1003 objectives. Setting up a lab environment, configuring inputs, performing maintenance tasks, and troubleshooting real scenarios develops confidence and proficiency. Practical exercises reinforce theoretical knowledge, ensuring administrators can manage complex datasets, maintain system health, and provide accurate insights consistently

Advanced Indexing and Data Management Techniques

Understanding indexing intricacies, including retention policies, index replication, and event parsing, is central to the exam. Candidates must know how to structure indexes for performance and scalability while ensuring data integrity. Advanced data management techniques allow administrators to maintain high-performance environments, optimize storage, and support rapid retrieval for searching and reporting

Event Correlation and Trend Analysis

Event correlation across multiple data sources enables identification of operational patterns, anomalies, and risks. Candidates must understand how to group related events, apply statistical analysis, and generate reports that highlight trends. Trend analysis supports predictive monitoring, resource planning, and proactive incident response, ensuring that organizations can address challenges before they escalate

Maintenance of Large-Scale Environments

Managing large-scale Splunk deployments requires expertise in scaling indexers, optimizing search heads, and coordinating distributed forwarders. Candidates are expected to plan and implement strategies for high availability, redundancy, and fault tolerance. Efficient management of large environments ensures consistent performance, reliability, and scalability while minimizing operational risk

Reporting and Analytical Capabilities

Generating comprehensive reports is a fundamental administrative task. Administrators must combine multiple searches, apply statistical functions, and create dashboards that summarize operational insights. Advanced reporting techniques include visualizing trends, highlighting anomalies, and providing actionable insights for stakeholders. Proficiency in reporting enhances decision-making and demonstrates the practical value of Splunk data

Final Integration of Skills

The SPLK-1003 exam tests the integration of all administrative skills, from deployment and data management to monitoring, troubleshooting, and security. Candidates must demonstrate the ability to apply knowledge holistically, ensuring that Splunk Enterprise environments operate efficiently, securely, and reliably. Mastery of these integrated competencies allows certified administrators to provide continuous operational value and maintain the effectiveness of Splunk in complex environments

Scaling Splunk Enterprise Deployments

Managing large and distributed Splunk Enterprise deployments is a critical area of focus for the SPLK-1003 exam. Administrators must understand how to design scalable architectures that accommodate growing volumes of data while maintaining performance. This includes deploying multiple indexers and search heads, configuring load balancing, and optimizing forwarder distribution. Knowledge of clustering, replication, and high availability strategies ensures that the environment remains resilient and responsive under high workloads. Effective scaling practices allow administrators to maintain consistent search performance, ensure rapid data indexing, and support multiple concurrent users without degradation

Distributed Search and Search Head Clustering

In complex deployments, distributed search and search head clustering play a pivotal role. Administrators must be able to configure multiple search heads to provide a unified search experience across all indexed data. Understanding how search heads, search peers, and load balancing work together allows efficient query processing and high availability. Clustering ensures that searches continue to operate seamlessly even if individual components fail. Candidates must be familiar with replication factors, bucket management, and failover mechanisms to maintain search reliability and provide uninterrupted access to data.

Indexer Clustering and Data Replication

Indexer clustering is essential for data reliability and fault tolerance. Administrators must configure clusters to replicate data across multiple indexers to prevent loss and support high availability. Understanding peer nodes, the manager node (called the master node in older releases), and replication policies enables effective management of data integrity. Candidates should be able to monitor cluster health, manage bucket copies, and handle node failures to ensure continuous indexing and retrieval capabilities. Proficiency in indexer clustering helps keep critical data protected and accessible, supporting operational continuity and organizational decision-making.
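The cost of replication can be estimated with simple arithmetic. The sketch below assumes a common sizing rule of thumb, that compressed raw data takes roughly 15% of the original volume and index files roughly 35%; both ratios vary widely with the data and are assumptions here, as are the function names. The replication factor governs how many copies of the raw data exist, and the search factor governs how many of those copies are also searchable.

```python
def cluster_storage_gb(daily_gb, retention_days, replication_factor, search_factor,
                       raw_ratio=0.15, index_ratio=0.35):
    """Estimate total cluster disk usage in GB under rule-of-thumb ratios."""
    if search_factor > replication_factor:
        raise ValueError("search factor cannot exceed replication factor")
    # Every copy holds raw data; only searchable copies hold index files too.
    per_day = daily_gb * (raw_ratio * replication_factor + index_ratio * search_factor)
    return per_day * retention_days


def tolerated_peer_failures(replication_factor):
    """Number of peers that can be lost without losing the last copy of any bucket."""
    return replication_factor - 1


print(round(cluster_storage_gb(100, 90, replication_factor=3, search_factor=2)))  # 10350
print(tolerated_peer_failures(3))  # 2
```

With 100 GB per day, 90 days of retention, a replication factor of 3, and a search factor of 2, the estimate is about 10,350 GB across the cluster, compared with about 4,500 GB for a single unreplicated copy.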

Advanced Monitoring and Alert Configuration

Administrators are expected to set up monitoring frameworks that track performance, detect anomalies, and trigger automated alerts. SPLK-1003 focuses on the ability to configure real-time and scheduled alerts for system health metrics, indexing rates, search performance, and resource utilization. Effective alerting requires defining thresholds, understanding dependencies, and integrating with workflow automation to initiate corrective actions. Administrators must ensure that alerts are actionable, minimize false positives, and support proactive management of the environment

Performance Tuning for Large Deployments

Performance tuning is a fundamental aspect of managing enterprise-scale Splunk environments. Candidates must understand how to optimize indexing, searching, and dashboard rendering to maintain responsiveness. Techniques include using indexed fields efficiently, applying search optimization practices, managing summary indexing, and leveraging report acceleration. Administrators should also monitor and tune system resources, including CPU, memory, and storage, to prevent bottlenecks. Performance tuning ensures that the environment delivers consistent results, supports high concurrency, and maintains fast response times for complex queries

Security and Access Control at Scale

Managing access and security across large environments is crucial for protecting sensitive data. Candidates must implement role-based access controls, configure authentication methods, and enforce security policies consistently. This includes integrating with external identity providers, managing user groups, and auditing access to ensure compliance. Administrators should also implement monitoring for suspicious activity and enforce best practices for secure deployment. Comprehensive access control and security management guarantee that only authorized personnel can access critical information while maintaining operational efficiency

Data Integrity and Retention Management

Maintaining data integrity and managing retention policies are essential skills for SPLK-1003 candidates. Administrators must ensure that indexes are configured correctly, data is replicated appropriately, and retention settings align with organizational requirements. Understanding frozen and archived data, index rotation, and storage optimization allows administrators to balance performance, storage costs, and regulatory compliance. Effective retention management ensures that historical data is available for analysis while preventing system performance degradation.
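Retention is governed by both an age limit and a size limit on an index, and whichever is reached first determines when buckets are frozen. The sketch below works out which limit wins for a given ingest rate. The function name and input values are hypothetical, and the daily volume is assumed to be the on-disk size after compression; the two limits correspond to the `frozenTimePeriodInSecs` and `maxTotalDataSizeMB` settings in `indexes.conf`.

```python
def effective_retention(daily_disk_mb, max_total_mb, frozen_secs):
    """Return (retention_days, limiting_factor) for one index."""
    age_days = frozen_secs / 86400            # age-based limit in days
    size_days = max_total_mb / daily_disk_mb  # days until the size cap is hit
    if age_days <= size_days:
        return age_days, "age"
    return size_days, "size"


# 5,000 MB/day on disk, a 500,000 MB cap, and a 180-day age limit (example values).
print(effective_retention(5000, 500000, 180 * 86400))  # (100.0, 'size')
```

In this example the size cap is reached after 100 days, so data is frozen well before the intended 180 days; either the cap must be raised or the retention expectation lowered.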

Troubleshooting Complex Issues

In enterprise environments, administrators must be prepared to troubleshoot complex issues that can affect indexing, search, and overall system performance. Candidates should be able to identify root causes using diagnostic tools, logs, and monitoring dashboards. Troubleshooting scenarios may include slow searches, data ingestion failures, forwarder connectivity problems, or cluster misconfigurations. Applying systematic approaches, understanding interdependencies, and implementing corrective actions ensure that problems are resolved quickly and efficiently, minimizing operational impact

Workflow Automation and Integration

Workflow automation enhances operational efficiency by enabling Splunk to perform predefined actions based on alerts or search results. Candidates must configure automated workflows that integrate with dashboards, notifications, and external systems. This can include executing scripts, triggering remediation processes, or updating monitoring tools. Workflow automation reduces manual intervention, accelerates response times, and ensures consistent handling of recurring operational tasks. Administrators must ensure workflows are reliable, maintainable, and aligned with operational objectives

Knowledge Object Lifecycle Management

Knowledge objects such as saved searches, macros, event types, and tags are essential for standardizing operations and ensuring consistent reporting. SPLK-1003 emphasizes the ability to create, manage, and organize knowledge objects across distributed environments. Administrators must implement naming conventions, document object usage, and maintain consistency to support collaborative environments. Proper lifecycle management of knowledge objects reduces redundancy, facilitates troubleshooting, and ensures that dashboards, alerts, and reports remain reliable and accurate

Data Normalization and Enrichment at Scale

Handling diverse data sources requires advanced data normalization and enrichment techniques. Candidates must implement calculated fields, lookups, and the Common Information Model to standardize events and facilitate analysis across multiple sources. Enrichment adds context to raw data, enabling more meaningful reporting and operational insight. Administrators must ensure that normalization and enrichment processes are efficient, consistent, and scalable to handle high volumes of events without impacting performance

Advanced Dashboard Design and Interactivity

Creating dashboards that provide clear and actionable insights at scale is a critical skill. Administrators should design dashboards that combine charts, tables, and event panels effectively while maintaining responsiveness in large environments. Interactive features such as drilldowns, input controls, and dynamic filtering enable users to explore data in depth and respond to operational changes quickly. Well-designed dashboards support informed decision-making and provide stakeholders with immediate access to critical metrics

Search Optimization and Query Performance

Optimizing search performance in large-scale deployments requires a deep understanding of SPL, indexing, and resource management. Candidates must implement techniques such as search filtering, summary indexing, report acceleration, and indexed field usage to reduce system load. Administrators should monitor search execution times, identify slow queries, and tune resource allocation to maintain fast and reliable results. Effective search optimization ensures that operational monitoring, dashboards, and reporting remain performant even under high data volumes

Event Correlation and Predictive Analysis

Event correlation allows administrators to detect patterns and anticipate potential issues. Candidates must be able to analyze sequences of events, apply statistical functions, and generate alerts or reports that identify trends. Predictive analysis supports proactive monitoring, helping to prevent outages, optimize resources, and anticipate performance bottlenecks. Understanding correlation techniques and predictive capabilities enhances the value of Splunk as an operational intelligence platform

Proactive Maintenance and System Health Checks

Regular maintenance is essential for sustaining large Splunk environments. Administrators must conduct health checks, perform backups, and validate configurations to prevent issues before they affect operations. Monitoring indexing rates, queue sizes, and system resource usage allows administrators to address potential problems proactively. Structured maintenance practices ensure system reliability, data integrity, and uninterrupted availability of services

Integration with External Tools and Services

Advanced SPLK-1003 administration includes integrating Splunk with external monitoring, alerting, and operational tools. Administrators must configure workflows that communicate with ticketing systems, notification platforms, or custom scripts. Integration extends the capabilities of Splunk, enabling automated responses, centralized monitoring, and streamlined incident management. Effective integration ensures that data insights translate into operational actions quickly and efficiently

Scaling Knowledge Object Usage

In distributed environments, maintaining consistency of knowledge objects is critical. Administrators must replicate saved searches, macros, and event types across multiple search heads and environments. Implementing proper version control, documentation, and object management practices ensures that dashboards, alerts, and reports function correctly across all nodes. Scaling knowledge object usage supports collaboration and maintains operational reliability

Troubleshooting Distributed Environments

Managing issues in clustered or distributed deployments requires specialized troubleshooting skills. Administrators must be able to isolate problems in indexing clusters, search head clusters, or forwarder configurations. Using monitoring dashboards, logs, and diagnostic tools, candidates can identify performance bottlenecks, replication errors, or search inconsistencies. Effective troubleshooting strategies minimize downtime, maintain data availability, and ensure consistent user experience

Continuous Learning and Skill Advancement

The SPLK-1003 exam encourages ongoing skill development. Administrators should regularly explore new SPL functions, optimize workflows, and refine dashboards. Continuous learning allows professionals to adapt to evolving data challenges, implement advanced techniques, and maintain operational excellence. By staying current with best practices and platform capabilities, administrators ensure that Splunk environments remain efficient, reliable, and capable of supporting organizational objectives

Enhancing Operational Intelligence

Certified administrators leverage Splunk to improve operational intelligence across organizations. By combining efficient data management, optimized searches, monitoring, and automated workflows, administrators provide actionable insights that inform decision-making. The SPLK-1003 skill set enables proactive identification of issues, trend analysis, and resource optimization, ensuring that Splunk delivers maximum operational value

Ensuring High Availability and Redundancy

High availability and redundancy are crucial for mission-critical Splunk deployments. Administrators must configure replication, clustering, and failover mechanisms to maintain system uptime. Understanding node roles, bucket replication, and search head failover ensures uninterrupted service. High availability planning allows administrators to minimize the impact of hardware failures, network issues, or configuration errors while maintaining data accessibility and system performance

Advanced Data Management Techniques

Large-scale deployments require advanced data handling capabilities. Administrators must optimize indexing strategies, manage retention policies, and implement archiving processes. Understanding frozen and archived data, event compression, and storage tiering ensures efficient utilization of resources. Effective data management techniques maintain system performance, reduce operational costs, and support long-term data availability

Holistic Application of SPLK-1003 Skills

The SPLK-1003 exam emphasizes the holistic application of administrative skills, combining deployment, indexing, searching, user management, monitoring, troubleshooting, and security. Candidates must demonstrate the ability to integrate these competencies to maintain complex Splunk environments effectively. Mastery of these skills ensures operational continuity, enhances performance, and provides actionable insights to support organizational decision-making

Preparing for Complex Operational Scenarios

Preparation for SPLK-1003 requires engagement with complex operational scenarios that simulate real-world environments. Candidates should practice handling distributed data, performing maintenance tasks, responding to alerts, and troubleshooting system issues. This hands-on experience ensures that administrators can apply theoretical knowledge in practical contexts, making decisions that maintain system integrity and operational efficiency

Reporting and Analytical Excellence

Administrators must develop advanced reporting capabilities, combining multiple searches and statistical functions to generate comprehensive dashboards. Reports should highlight trends, detect anomalies, and provide actionable insights for stakeholders. Analytical excellence ensures that decision-makers have access to timely and accurate data, improving organizational responsiveness and resource allocation

Advanced Troubleshooting Techniques

Managing complex Splunk environments requires a deep understanding of troubleshooting methodologies. Administrators must identify issues across multiple layers of the architecture, including forwarders, indexers, and search heads. Effective troubleshooting involves analyzing system logs, monitoring dashboards, and examining event pipelines to isolate the root cause of problems. Common challenges include search slowdowns, indexing delays, and replication failures. Candidates must be able to apply systematic approaches to resolve these issues efficiently while minimizing operational disruption. Mastery of advanced troubleshooting ensures continuous availability and reliability of Splunk Enterprise

System Performance Analysis

Performance analysis is a critical skill for SPLK-1003 candidates. Administrators should monitor key metrics such as CPU utilization, memory consumption, indexing rates, and search latency. Understanding the relationship between data volume, indexing efficiency, and search performance enables administrators to make informed adjustments. Performance tuning includes optimizing search queries, configuring appropriate index retention policies, and balancing workload across indexers and search heads. Regular analysis and adjustment prevent bottlenecks and maintain fast, reliable data access for all users

Cluster Management and Optimization

In clustered environments, administrators must manage indexer and search head clusters effectively. Indexer clustering ensures data redundancy and high availability by replicating bucket copies across multiple nodes. Candidates need to monitor cluster health, handle node failures, and maintain proper replication factors. Search head clustering supports load balancing and redundancy for search operations, providing seamless access even during node outages. Optimization involves configuring peer and manager node roles (the manager node was called the master node in older releases), monitoring replication queues, and maintaining synchronization to ensure consistent performance and minimal downtime.

Security Administration and Compliance

Maintaining secure access to data is an essential part of SPLK-1003 responsibilities. Administrators must implement role-based access control, configure authentication methods, and monitor for unauthorized activity. Security management also involves applying encryption, auditing user actions, and enforcing organizational policies. Compliance with data protection standards ensures that sensitive information is safeguarded. Administrators must balance security measures with usability, ensuring authorized users can access necessary resources without compromising system integrity.

Automated Workflows and Operational Efficiency

Workflow automation enhances operational efficiency by reducing manual intervention and enabling rapid response to critical events. Candidates must configure automated processes that trigger based on alert conditions, search results, or system thresholds. Examples include automated notifications, system updates, and integration with external incident management tools. Properly designed workflows improve response times, maintain consistency in operations, and allow administrators to focus on higher-value tasks. Automation helps Splunk environments operate efficiently, reliably, and proactively.

Knowledge Object Standardization

Managing knowledge objects such as saved searches, macros, and event types is crucial in maintaining consistent operational processes. Administrators must establish conventions for naming, documentation, and usage to ensure uniformity across dashboards and reports. Proper management reduces redundancy, facilitates troubleshooting, and enables collaboration among teams. Standardized knowledge objects improve maintainability and ensure that analytical processes remain consistent and reproducible across the environment

Data Normalization and Lookup Integration

Candidates must demonstrate the ability to normalize data from diverse sources to maintain consistency and facilitate analysis. This involves applying calculated fields, lookups, and the Common Information Model to standardize event structures. Enrichment processes add context and value, enabling more meaningful reporting and trend analysis. Administrators should design normalization and enrichment workflows that scale efficiently, handling high volumes of data without impacting performance. Consistent and enriched datasets support reliable dashboards, alerts, and operational insights.
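The idea behind normalization and lookup enrichment can be sketched outside Splunk in a few lines of Python. This is only a conceptual model, not how Splunk implements field aliases or lookups internally. The alias map, the lookup table, and the field names `asset_owner` and `asset_priority` are made up for the example; `src`, `dest`, and `user` follow Common Information Model naming.

```python
# Hypothetical alias map: vendor-specific field names -> CIM-style names.
FIELD_ALIASES = {"src_ip": "src", "dst_ip": "dest", "username": "user"}

# Hypothetical lookup table keyed by source address.
ASSET_LOOKUP = {
    "10.0.0.5": {"asset_owner": "payments", "asset_priority": "high"},
}


def normalize(event: dict) -> dict:
    """Rename vendor fields to their common names; keep everything else."""
    return {FIELD_ALIASES.get(name, name): value for name, value in event.items()}


def enrich(event: dict, lookup: dict, key: str = "src") -> dict:
    """Add lookup columns when the key matches; otherwise return the event as-is."""
    return {**event, **lookup.get(event.get(key), {})}


if __name__ == "__main__":
    raw = {"src_ip": "10.0.0.5", "username": "alice", "bytes": 120}
    print(enrich(normalize(raw), ASSET_LOOKUP))
```

Normalizing first means the lookup only has to know one key name (`src`), regardless of which source the event came from.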

Dashboard Optimization and User Experience

Creating dashboards that are both informative and performant is essential. Administrators must design dashboards that present key metrics clearly, combine multiple visualization types, and maintain responsiveness under heavy data loads. Interactive features such as drilldowns, dynamic filters, and input controls allow users to explore data in depth and make informed decisions. Proper dashboard design balances clarity, relevance, and speed, ensuring that users can quickly access actionable information without performance degradation

Alert Strategy and Incident Response

Configuring alerts is a core competency for SPLK-1003 administrators. Candidates must define alert conditions, thresholds, and schedules to monitor critical system and data events. Alerts should be actionable, minimize false positives, and integrate with automated workflows or external notification systems. Proper alert strategy enables proactive incident response, reduces downtime, and supports operational continuity. Administrators must maintain a balance between sensitivity and relevance to avoid alert fatigue while ensuring timely detection of issues.
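The trade-off between sensitivity and alert fatigue can be made concrete with a small Python model of a threshold alert that suppresses repeat notifications for a fixed window. The class and parameter names below are hypothetical and only mimic the general idea of alert throttling; they are not Splunk settings.

```python
class ThrottledAlert:
    """Fire when a count exceeds a threshold, at most once per suppression window."""

    def __init__(self, threshold: int, suppress_seconds: float) -> None:
        self.threshold = threshold
        self.suppress_seconds = suppress_seconds
        self.last_fired = None  # time of the most recent notification, if any

    def should_fire(self, count: int, now: float) -> bool:
        # Condition not met: nothing to report.
        if count <= self.threshold:
            return False
        # Condition met, but a notification was sent recently: stay quiet.
        if self.last_fired is not None and now - self.last_fired < self.suppress_seconds:
            return False
        self.last_fired = now
        return True


if __name__ == "__main__":
    alert = ThrottledAlert(threshold=10, suppress_seconds=300)
    for t, failures in [(0, 5), (60, 20), (120, 25), (400, 30)]:
        print(t, failures, alert.should_fire(failures, now=t))
```

Raising the threshold reduces false positives, while lengthening the suppression window reduces duplicate notifications for an incident that is already known.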

Search Efficiency and Resource Management

Optimizing search efficiency is vital for large-scale deployments. Candidates should understand how to construct efficient SPL queries, use indexed fields effectively, and apply summary indexing or report acceleration where appropriate. Resource management includes balancing search load across indexers and search heads, monitoring search queue times, and adjusting concurrency settings. Efficient search management ensures timely data retrieval, reduces system strain, and supports reliable dashboard and alert performance.
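The reasoning behind summary indexing is that a small pre-aggregated dataset can answer recurring questions without rescanning raw events. The Python sketch below models that idea only; it is not Splunk's implementation, and the function names are invented for illustration. Timestamps are assumed to be epoch seconds.

```python
from collections import Counter


def build_hourly_summary(event_times):
    """Pre-aggregate raw event timestamps (epoch seconds) into per-hour counts."""
    return Counter(int(t) // 3600 for t in event_times)


def count_between(summary, start_hour, end_hour):
    """Answer a range query from the summary instead of the raw events.

    Hours are integer hour indexes; the range is [start_hour, end_hour).
    """
    return sum(n for hour, n in summary.items() if start_hour <= hour < end_hour)


if __name__ == "__main__":
    times = [10, 20, 3600, 3700, 7300, 7400, 7500]
    summary = build_hourly_summary(times)
    print(dict(summary))
    print(count_between(summary, 1, 3))
```

The summary here holds one entry per hour rather than one per event, which is why queries against it stay cheap as raw volume grows.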

Event Correlation and Predictive Monitoring

Administrators must correlate events across multiple datasets to detect patterns and predict potential issues. This includes combining data from various sources, applying statistical functions, and identifying trends or anomalies. Predictive monitoring allows proactive intervention before operational issues escalate. Candidates must design searches and alerts that provide actionable insights and support decision-making, so that the environment remains resilient and responsive to emerging conditions.
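One common statistical approach to spotting anomalies is a z-score test: flag any interval whose event count sits far from the mean, measured in standard deviations. The Python sketch below shows the calculation under the assumption that counts have already been collected per interval. The function name and the default threshold of 2.0 are illustrative choices, not Splunk defaults.

```python
from statistics import mean, pstdev


def find_anomalies(counts, z_threshold=2.0):
    """Return the indexes of counts whose z-score exceeds the threshold."""
    mu = mean(counts)
    sigma = pstdev(counts)  # population standard deviation
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [i for i, c in enumerate(counts) if abs(c - mu) / sigma > z_threshold]


if __name__ == "__main__":
    hourly_errors = [100, 102, 98, 101, 99, 100, 300]
    print(find_anomalies(hourly_errors))
```

A single large spike also inflates the mean and standard deviation it is compared against, so in practice the baseline is often computed from a trailing window that excludes the interval being tested.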

Backup, Restore, and Disaster Recovery Planning

Ensuring data integrity and availability requires robust backup and restore strategies. Administrators must develop processes for regular backups of indexes, configurations, and critical system files. Disaster recovery planning involves defining procedures for system restoration, validating backups, and ensuring minimal data loss during outages. Effective planning enables rapid recovery from failures, maintains business continuity, and supports operational resilience.
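A configuration backup with a basic validation step might look like the following Python sketch. It assumes the configuration lives in a directory such as `$SPLUNK_HOME/etc` and that a plain compressed archive is acceptable. The function names, archive naming scheme, and paths are hypothetical, and index data would need its own strategy.

```python
import tarfile
from pathlib import Path


def backup_config(etc_dir: Path, dest_dir: Path, label: str) -> Path:
    """Write etc_dir into a gzip-compressed tar archive and return its path."""
    dest_dir.mkdir(parents=True, exist_ok=True)
    archive = dest_dir / f"splunk-etc-{label}.tar.gz"
    with tarfile.open(archive, "w:gz") as tar:
        # Store everything under a stable top-level name inside the archive.
        tar.add(etc_dir, arcname="etc")
    return archive


def list_backup(archive: Path) -> list:
    """Reopen the archive and list its members as a minimal validation step."""
    with tarfile.open(archive, "r:gz") as tar:
        return sorted(tar.getnames())


if __name__ == "__main__":
    # Example paths only; adjust to the actual installation.
    created = backup_config(Path("/opt/splunk/etc"), Path("/backups"), "nightly")
    print(len(list_backup(created)), "entries archived")
```

Listing the archive only proves it can be read; a fuller validation would restore it to a scratch location and compare the files.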

Integrating Splunk with Enterprise Systems

Integration with external systems extends the functionality of Splunk and enhances operational capabilities. Administrators must configure workflows that interact with ticketing platforms, notification tools, or custom scripts. Integration allows automated responses, centralized monitoring, and streamlined incident management. Proper integration ensures that Splunk functions as a proactive component of enterprise operations, providing insights and triggering actions across organizational systems

Scaling Operational Practices

As Splunk deployments grow, administrators must scale operational practices to maintain performance and reliability. This includes standardizing procedures for user management, knowledge object handling, monitoring, and troubleshooting. Scalable practices ensure consistent performance, maintain security standards, and enable efficient management of increasingly complex environments. Administrators should implement processes that are repeatable, maintainable, and capable of supporting high concurrency and large data volumes

Comprehensive Reporting and Analytics

Advanced reporting and analytics skills are essential for delivering actionable insights. Administrators must combine multiple searches, statistical functions, and visualizations to generate comprehensive reports. Reports should identify trends, highlight anomalies, and provide context for operational decision-making. Effective reporting supports data-driven management, enables proactive interventions, and demonstrates the operational value of Splunk data

Continuous Monitoring and Optimization

Maintaining continuous operational efficiency requires ongoing monitoring and optimization. Administrators must track system performance, analyze usage patterns, and adjust configurations to optimize indexing, searching, and dashboard rendering. Continuous improvement practices ensure that resources are used effectively, system responsiveness remains high, and data availability is maintained. This approach allows administrators to anticipate potential issues and implement improvements proactively

Scenario-Based Skill Application

The SPLK-1003 exam emphasizes practical application in realistic operational scenarios. Candidates should practice managing distributed environments, configuring alerts, performing maintenance tasks, and troubleshooting system issues under simulated conditions. Scenario-based exercises reinforce theoretical knowledge, enhance problem-solving skills, and ensure administrators can respond effectively to complex operational challenges

Proactive System Health Management

Ensuring system health involves routine checks, monitoring metrics, and proactive interventions. Administrators must track indexing performance, search response times, forwarder connectivity, and resource utilization. Proactive management allows early detection of anomalies, minimizing disruptions and maintaining continuous access to critical data. This skill is essential for sustaining high performance and reliability in enterprise environments

Advanced Indexing Strategies

Optimizing indexing strategies is crucial for performance and scalability. Administrators must configure index settings, manage retention policies, and implement replication to ensure data reliability and efficient storage use. Advanced indexing techniques support rapid search, prevent resource bottlenecks, and maintain system stability under heavy data loads

Strategic Use of Knowledge Objects

Knowledge objects enable administrators to standardize processes, improve consistency, and reduce operational overhead. Creating reusable saved searches, macros, event types, and tags ensures that dashboards, alerts, and reports remain accurate and maintainable. Strategic management of knowledge objects supports collaboration, reduces errors, and enhances operational efficiency

Holistic Environment Management

SPLK-1003 administration requires integrating all aspects of system management, including deployment, indexing, searching, user management, monitoring, security, and troubleshooting. Administrators must ensure that all components function cohesively to maintain a reliable, secure, and high-performing environment. Holistic management ensures that Splunk Enterprise delivers continuous operational value and actionable insights for stakeholders

Predictive Analysis and Trend Reporting

Administrators should leverage Splunk’s capabilities to analyze trends and anticipate operational issues. Using historical data, statistical analysis, and correlation techniques, candidates can identify patterns, predict potential failures, and implement proactive measures. Predictive analysis enhances operational readiness, optimizes resource allocation, and ensures that performance metrics are maintained consistently

Ensuring Operational Continuity

Maintaining operational continuity requires a combination of monitoring, alerting, backup strategies, and proactive troubleshooting. Administrators must ensure that all components are functioning, data integrity is maintained, and users have uninterrupted access to critical information. Operational continuity safeguards organizational workflows, supports informed decision-making, and minimizes the risk of disruptions

Preparing for SPLK-1003 Certification

Effective preparation combines theoretical understanding with extensive hands-on experience. Candidates should practice deploying distributed environments, managing users and indexes, configuring alerts and workflows, and troubleshooting scenarios. Focused preparation ensures that administrators can demonstrate proficiency across all SPLK-1003 objectives, applying knowledge practically to maintain high-performing, secure, and resilient Splunk environments

Continuous Skill Enhancement

Ongoing skill development is crucial for administrators managing evolving Splunk environments. Exploring advanced features, refining workflows, optimizing dashboards, and implementing predictive analysis techniques strengthens operational capabilities. Continuous enhancement ensures administrators remain proficient, adaptable, and capable of supporting complex enterprise deployments efficiently

Driving Organizational Insights

Certified administrators translate operational data into actionable insights. By managing inputs, indexes, searches, alerts, and dashboards, administrators provide stakeholders with clear, timely information. SPLK-1003 skills enable proactive monitoring, resource optimization, and informed decision-making, ensuring that Splunk environments contribute maximum value to organizational objectives

High Availability and Disaster Recovery Planning

Ensuring high availability and implementing disaster recovery strategies are central to SPLK-1003 administration. Administrators must design environments that can withstand hardware failures, network interruptions, or node outages with minimal data loss and downtime. This includes configuring indexer clustering for data replication, search head clustering for seamless query operations, and forwarder redundancy to maintain continuous data ingestion. Disaster recovery planning involves defining recovery procedures, validating backups, and establishing failover protocols to restore services rapidly. These strategies help preserve access to critical data and maintain operational continuity across the enterprise.

Advanced Monitoring Strategies

Effective monitoring is essential for sustaining a robust Splunk environment. Administrators should implement comprehensive monitoring frameworks that track indexing performance, search latency, system resource utilization, and network health. This includes configuring metrics collection, creating health dashboards, and setting proactive alerts for anomalies. By analyzing trends over time, administrators can anticipate potential issues, optimize system performance, and prevent failures before they impact users. Continuous monitoring supports operational efficiency and ensures that all components operate optimally

Optimizing Search Performance

Search performance optimization is a key competency for SPLK-1003 candidates. Administrators must design searches that minimize system resource consumption while delivering accurate results. Techniques include using indexed fields for filtering, summarizing large datasets, and leveraging accelerated reports. Understanding search processing order, efficient SPL query design, and search job scheduling ensures rapid query execution even in environments with high data volume and concurrent user activity. Optimized search performance maintains user productivity and enhances the reliability of dashboards and alerts

Managing Distributed Deployments

Large-scale deployments require expertise in managing distributed environments. Administrators must coordinate multiple indexers, search heads, and forwarders to maintain consistent performance and data integrity. This includes load balancing, replication management, and synchronization of configurations across nodes. Proper management ensures that distributed systems handle growing data volumes efficiently, maintain high availability, and deliver consistent search results to users. Mastery of distributed deployment management allows administrators to scale operations while maintaining operational stability

User Access Management and Security Policies

Managing user access and enforcing security policies is critical for maintaining confidentiality and operational control. Administrators must configure role-based access controls, assign permissions appropriately, and integrate authentication mechanisms. This includes setting up user groups, enforcing password policies, and auditing access to detect unauthorized activity. Maintaining strict security standards ensures that sensitive data is protected while enabling authorized personnel to perform necessary tasks efficiently. Security management also contributes to regulatory compliance and overall system integrity.
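Role-based access control in Splunk allows one role to inherit from another, so a user's effective permissions are the union of everything reachable through inheritance. The Python sketch below models that resolution. The role definitions are illustrative (in particular, `soc_analyst` and the capability sets shown are assumptions for the example), and the code is a conceptual model rather than Splunk's own logic.

```python
# Illustrative role definitions; real roles and capabilities will differ.
ROLES = {
    "user": {"capabilities": {"search"}, "imports": []},
    "power": {"capabilities": {"schedule_search", "rtsearch"}, "imports": ["user"]},
    "soc_analyst": {"capabilities": set(), "imports": ["power"]},  # hypothetical role
}


def effective_capabilities(role: str, roles: dict) -> set:
    """Collect capabilities from a role and every role it inherits from."""
    seen = set()
    capabilities = set()
    pending = [role]
    while pending:
        name = pending.pop()
        if name in seen:
            continue  # guards against circular inheritance
        seen.add(name)
        definition = roles[name]
        capabilities.update(definition["capabilities"])
        pending.extend(definition["imports"])
    return capabilities


if __name__ == "__main__":
    print(sorted(effective_capabilities("soc_analyst", ROLES)))
```

Because inherited capabilities accumulate, auditing a role means checking its whole inheritance chain, not just its own definition.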

Workflow Automation and Operational Efficiency

Automation improves operational efficiency by reducing manual intervention and standardizing routine tasks. Administrators must configure automated workflows that respond to specific conditions, such as alert triggers, search results, or system thresholds. This may include automated notifications, remediation scripts, or integration with external management systems. Effective automation allows administrators to handle repetitive tasks efficiently, reduce human error, and ensure consistent responses to operational events. Workflow automation enhances overall system reliability and operational agility

Knowledge Object Management and Standardization

Knowledge objects are critical for maintaining consistency in searches, reports, and dashboards. Administrators must create and organize saved searches, macros, event types, and tags to ensure reuse across the environment. Standardizing naming conventions, documenting object usage, and managing updates systematically reduces redundancy and prevents errors. Proper knowledge object management supports collaboration, simplifies maintenance, and enhances the reliability of analytical outputs for all users

Data Normalization and Enrichment Practices

Normalizing and enriching data ensures consistency, accuracy, and context for analysis. Administrators should implement calculated fields, lookups, and standard field mappings using the Common Information Model to standardize events across diverse sources. Enrichment adds meaningful context, improving the quality of reports, dashboards, and alerts. Scalable normalization and enrichment processes allow administrators to manage high volumes of data efficiently, ensuring that analytical insights remain reliable and actionable

Dashboard Design and Interactivity

Creating effective dashboards involves combining multiple visualization elements to communicate insights clearly. Administrators should design dashboards that integrate charts, tables, and event panels while maintaining responsiveness in large-scale environments. Interactive features such as drilldowns, dynamic filters, and input controls enable users to explore data in depth and respond to trends quickly. Well-designed dashboards improve operational decision-making and provide stakeholders with actionable insights without compromising system performance

Alerting and Proactive Incident Management

Configuring alerts and incident management workflows is essential for proactive system monitoring. Administrators must define conditions, thresholds, and schedules that trigger notifications or automated actions. Alerts should be designed to minimize false positives while ensuring timely response to critical events. Integration with automated workflows and external systems allows for immediate remediation and operational continuity. Effective alerting supports rapid problem resolution, reduces downtime, and maintains reliability across the environment

Indexing Strategies and Data Retention

Efficient indexing and retention strategies are crucial for managing system performance and storage. Administrators must configure indexes appropriately, manage retention policies, and ensure replication to maintain data integrity. Understanding how buckets move through the hot, warm, cold, and frozen stages, and how frozen data can be archived and later thawed, enables optimized storage usage and ensures that critical data remains accessible. Advanced indexing practices support rapid search execution, high availability, and long-term scalability, allowing Splunk environments to handle increasing data volumes without performance degradation.
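Retention is usually discussed in days, while the `frozenTimePeriodInSecs` setting in `indexes.conf` is expressed in seconds. The Python sketch below performs that conversion and renders a partial stanza. The helper name and the example index name are hypothetical, and required path settings such as `homePath`, `coldPath`, and `thawedPath` are intentionally omitted, so the output is a fragment rather than a complete index definition.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def retention_stanza(index_name: str, retention_days: int, max_size_mb: int) -> str:
    """Render the retention-related lines of an indexes.conf stanza."""
    if retention_days <= 0 or max_size_mb <= 0:
        raise ValueError("retention_days and max_size_mb must be positive")
    frozen_secs = retention_days * SECONDS_PER_DAY
    return "\n".join(
        [
            f"[{index_name}]",
            # Buckets older than this become eligible to roll to frozen.
            f"frozenTimePeriodInSecs = {frozen_secs}",
            # Size cap for the index; the oldest buckets roll to frozen once it is exceeded.
            f"maxTotalDataSizeMB = {max_size_mb}",
        ]
    )


if __name__ == "__main__":
    print(retention_stanza("web_logs", retention_days=90, max_size_mb=500000))
```

Whichever limit is reached first, age or size, triggers the roll to frozen, so both values need to be sized together.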

Performance Tuning for Enterprise Environments

Performance tuning involves optimizing both infrastructure and search operations to maintain responsiveness. Administrators must monitor system resource utilization, balance workloads, and adjust configurations for indexing and searching efficiency. Techniques such as summary indexing, report acceleration, and efficient query design reduce latency and improve user experience. Regular tuning ensures that Splunk environments can support high concurrency, large datasets, and complex analytical operations effectively

Event Correlation and Predictive Insights

Event correlation allows administrators to identify patterns, trends, and anomalies across multiple data sources. By analyzing correlated events, administrators can detect operational risks, predict failures, and implement proactive measures. Predictive insights support informed decision-making, resource optimization, and early issue resolution. Candidates must design searches, alerts, and dashboards that leverage correlation and predictive analytics to enhance situational awareness and operational intelligence

Backup, Recovery, and Contingency Planning

Robust backup and recovery procedures ensure data integrity and operational continuity. Administrators must implement systematic backup processes for indexes, configurations, and system files. Contingency planning involves defining recovery procedures, testing restoration processes, and ensuring minimal downtime in case of failures. Effective backup and recovery strategies safeguard critical data, maintain business continuity, and enhance overall system resilience

Integration with Enterprise Operations

Integrating Splunk with enterprise operations tools enhances the platform’s value. Administrators should configure connections with ticketing systems, notification services, and operational scripts. Integration enables automated responses, centralized monitoring, and streamlined incident resolution. Properly configured integrations allow Splunk to function as a central intelligence hub, providing actionable insights and facilitating coordinated operational workflows across the organization

Scaling Administrative Practices

As deployments grow, administrators must scale operational processes to maintain efficiency and consistency. This includes standardizing user management, knowledge object usage, monitoring, and troubleshooting practices. Scalable administrative practices ensure uniform performance, secure access, and maintainability across distributed environments. Implementing repeatable processes reduces errors, simplifies maintenance, and supports rapid adaptation to growing operational demands

Reporting and Analytical Excellence

Administrators must develop advanced reporting capabilities to provide stakeholders with actionable insights. Combining multiple searches, statistical functions, and visualizations enables comprehensive analysis of operational data. Reports should highlight trends, identify anomalies, and support decision-making. Analytical excellence ensures that operational data drives informed actions, enhances monitoring capabilities, and maximizes the strategic value of Splunk deployments

Continuous Monitoring and Process Improvement

Ongoing monitoring and continuous process improvement are essential for sustaining optimal performance. Administrators should track key performance indicators, analyze operational trends, and implement configuration adjustments to enhance indexing, searching, and dashboard performance. Continuous improvement practices maintain system efficiency, prevent degradation, and ensure that operational processes remain aligned with organizational objectives

Scenario-Based Operational Expertise

The SPLK-1003 exam emphasizes the application of knowledge in realistic scenarios. Administrators must be able to perform deployment, configuration, monitoring, troubleshooting, and user management tasks under simulated operational conditions. Scenario-based practice reinforces theoretical understanding, develops problem-solving skills, and ensures administrators can apply their expertise effectively in complex, real-world environments

Proactive Health Checks and Maintenance

Routine health checks and proactive maintenance are critical for system stability. Administrators must monitor indexing queues, search performance, forwarder connectivity, and resource utilization. Proactive interventions prevent potential issues, maintain uptime, and ensure consistent access to critical data. Regular maintenance activities, including system updates, backups, and configuration validation, support long-term operational reliability

Strategic Data Management

Advanced data management practices involve structuring indexes, optimizing retention policies, and implementing replication and archiving strategies. Administrators must balance storage efficiency with accessibility, ensuring that high-priority data remains quickly retrievable. Strategic data management supports performance, reduces storage costs, and enables long-term analytical capabilities across growing datasets

Knowledge Object Governance

Proper governance of knowledge objects ensures consistency, reliability, and maintainability across the environment. Administrators must implement structured processes for creating, updating, and documenting saved searches, macros, tags, and event types. Effective governance reduces errors, simplifies maintenance, and ensures that dashboards, alerts, and reports function reliably across distributed deployments

Integrating Predictive Analytics

Administrators should leverage predictive analytics capabilities to anticipate operational trends and potential failures. By analyzing historical data and identifying patterns, candidates can configure alerts, dashboards, and automated responses to prevent incidents. Predictive analytics enhances proactive monitoring, supports resource planning, and ensures that operations remain efficient and responsive

Operational Continuity and Resilience

Ensuring operational continuity requires integration of monitoring, alerting, backup, recovery, and troubleshooting practices. Administrators must maintain uninterrupted access to critical data, ensure system components function cohesively, and implement redundancy where necessary. Operational resilience minimizes downtime, preserves data integrity, and maintains consistent analytical and monitoring capabilities

Preparing for SPLK-1003 Certification Success

Effective preparation combines theoretical understanding with extensive hands-on experience in deployment, indexing, searching, user and role management, monitoring, troubleshooting, and automation. Practical exercises simulate real-world scenarios, enabling administrators to develop proficiency and confidence. Comprehensive preparation ensures candidates can demonstrate mastery of SPLK-1003 objectives and apply skills effectively in enterprise environments

Continuous Professional Development

Ongoing skill enhancement is critical for maintaining expertise in complex Splunk environments. Administrators should explore advanced features, refine workflows, optimize dashboards, and implement predictive monitoring techniques. Continuous development ensures that SPLK-1003 certified professionals remain capable of managing evolving operational challenges, optimizing system performance, and delivering actionable insights

Conclusion

The SPLK-1003 Splunk Enterprise Certified Admin certification represents a comprehensive validation of an administrator’s ability to manage, optimize, and secure enterprise Splunk environments. Achieving this certification requires a deep understanding of Splunk architecture, deployment strategies, indexing, searching, and user and role management. Administrators must demonstrate proficiency in monitoring system performance, troubleshooting complex issues, implementing high availability and disaster recovery solutions, and optimizing workflows to ensure operational efficiency. The exam emphasizes not only theoretical knowledge but also practical skills, requiring hands-on experience in managing distributed deployments, configuring alerts, and maintaining system health under real-world conditions.

Effective administration of Splunk environments involves more than just installation and configuration. It demands a strategic approach to scaling deployments, managing data flows, and maintaining the integrity and availability of critical information. Administrators must be capable of designing efficient indexing strategies, optimizing searches, and ensuring consistent and reliable access to data for multiple users. Knowledge object management, data normalization, and enrichment practices are essential for producing meaningful insights, supporting analytics, and enabling informed decision-making. In addition, proactive monitoring, predictive analysis, and automation of operational tasks help minimize downtime, prevent failures, and maintain system responsiveness, ensuring that the Splunk environment consistently supports organizational objectives.

Security and access management remain central to the role of a certified administrator. Implementing robust role-based access controls, authentication mechanisms, and auditing practices ensures that sensitive data is protected while allowing authorized users to perform necessary tasks effectively. Administrators must balance operational efficiency with compliance and security requirements, ensuring that the environment adheres to organizational policies and data protection standards. High availability, redundancy, and disaster recovery planning further strengthen the resilience of Splunk deployments, allowing organizations to maintain continuity of operations even under adverse conditions.

Preparation for the SPLK-1003 certification requires a combination of structured learning and practical, scenario-based experience. Candidates must become adept at applying advanced troubleshooting techniques, performance tuning, workflow automation, and predictive monitoring in distributed enterprise environments. Scenario-based practice reinforces understanding, builds confidence, and ensures administrators can respond effectively to real-world operational challenges. By mastering these skills, certified administrators are equipped to optimize system performance, deliver actionable insights, and support business-critical decision-making processes.

Overall, the SPLK-1003 certification equips IT professionals with the knowledge and expertise required to manage enterprise-grade Splunk environments efficiently. Certified administrators play a pivotal role in ensuring system stability, enhancing operational intelligence, and driving organizational success through effective data management and analytics. The certification serves as a benchmark of professional competence, demonstrating the ability to navigate complex deployments, maintain high performance, and implement best practices across all aspects of Splunk administration. Professionals who achieve this certification are recognized for their capability to maximize the operational value of Splunk Enterprise, making them indispensable assets to organizations that rely on data-driven decision-making.

Splunk SPLK-1003 practice test questions and answers, the training course, and the study guide are uploaded in ETE file format by real users. The SPLK-1003 Splunk Enterprise Certified Admin exam dumps and practice test questions and answers are intended to help students study for and pass the exam.



Go through each question, then study once more the topics you got wrong.