Pass Google Professional Data Engineer Exam in First Attempt Guaranteed!

Get 100% Latest Exam Questions, Accurate & Verified Answers to Pass the Actual Exam!

30 Days Free Updates, Instant Download!

Professional Data Engineer Premium Bundle

- Premium File 349 Questions & Answers. Last update: May 14, 2026

- Training Course 201 Video Lectures

- Study Guide 543 Pages

Includes question types found on the actual exam such as drag and drop, simulation, type-in and fill-in-the-blank.

Based on real-life scenarios similar to those encountered in the exam, allowing you to learn by working with real equipment.

Developed by IT experts who have passed the exam in the past. Covers in-depth knowledge required for exam preparation.

All Google Professional Data Engineer certification exam dumps, study guides, and training courses are prepared by industry experts. PrepAway's ETE files provide the Professional Data Engineer on Google Cloud Platform practice test questions and answers, and the exam dumps, study guide, and training courses help you study and pass hassle-free!

Certified Success: Step-by-Step to Google Professional Data Engineer Credential

The professional data engineer certification is one of the most sought-after credentials in the field of cloud computing and data engineering. Offered by Google Cloud, this certification validates the knowledge and practical expertise required to design, build, operationalize, secure, and monitor data processing systems. Individuals aiming to become skilled professionals in the modern data-driven ecosystem often pursue this certification to boost their career prospects and gain industry recognition.

This certification focuses on various facets of data engineering, including data architecture, machine learning model deployment, data processing pipelines, and cloud infrastructure optimization. With businesses increasingly relying on data to drive insights and strategic decisions, professionals capable of handling complex data systems are in high demand. Therefore, obtaining this certification can serve as a significant milestone in one’s professional journey.

Importance Of Data Engineering In The Modern Enterprise

Data engineering plays a foundational role in the contemporary business environment. As organizations collect massive amounts of data through digital channels, it becomes essential to process, store, and analyze this information effectively. A data engineer’s role is to ensure the integrity, scalability, and availability of this data for analytical consumption.

Unlike data analysts or data scientists who primarily focus on deriving insights, data engineers are responsible for designing and managing the infrastructure that facilitates this process. Their work enables businesses to extract meaningful information, thereby improving customer engagement, operational efficiency, and strategic planning. The professional data engineer certification prepares individuals to meet these evolving demands by equipping them with industry-relevant skills and hands-on experience.

Role And Responsibilities Of A Certified Data Engineer

A certified professional data engineer is expected to carry out a wide array of responsibilities that align with the goals of the organization. These responsibilities include but are not limited to designing scalable data architectures, integrating various data sources, optimizing performance of data pipelines, and ensuring security and compliance across systems. In addition, these professionals are often tasked with implementing best practices for data governance, managing metadata, and automating workflows.

Certified data engineers also collaborate with data scientists and business analysts to ensure that the data infrastructure meets the analytical needs of the organization. By understanding both the technical and business perspectives, data engineers serve as a bridge between raw data and actionable insights. This blend of skills makes them indispensable in today’s data-centric world.

Overview Of The Professional Data Engineer Exam

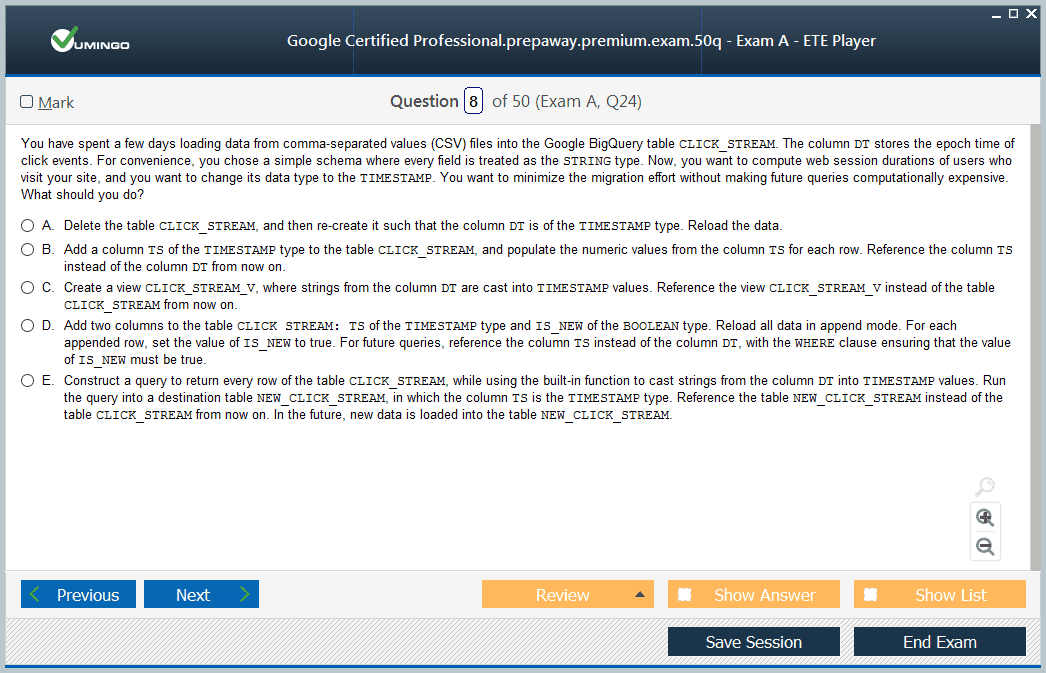

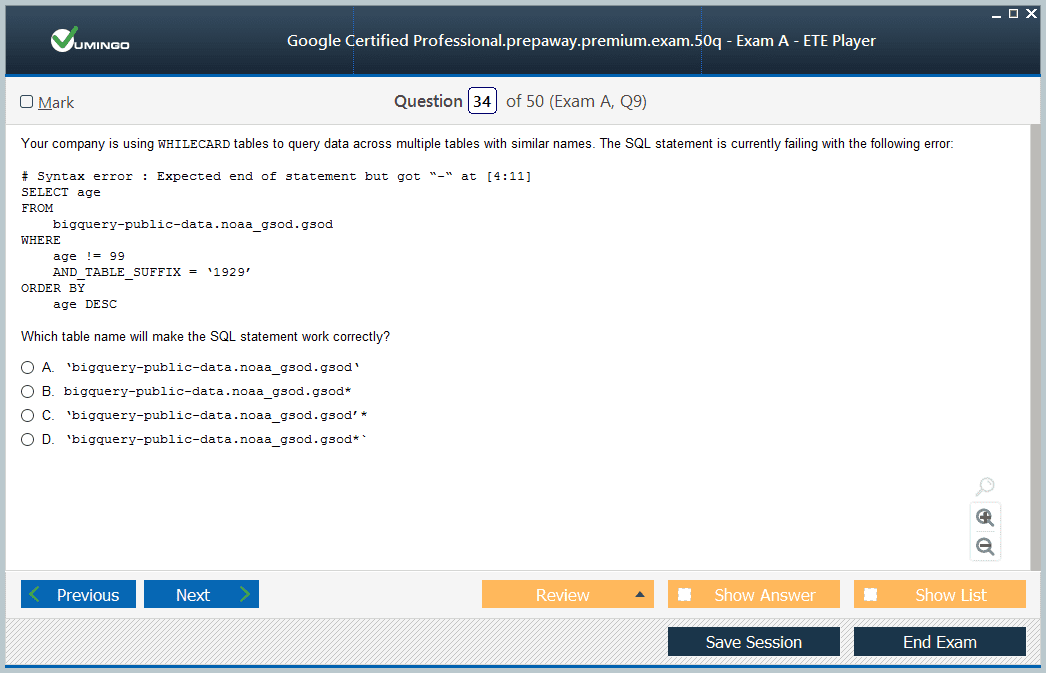

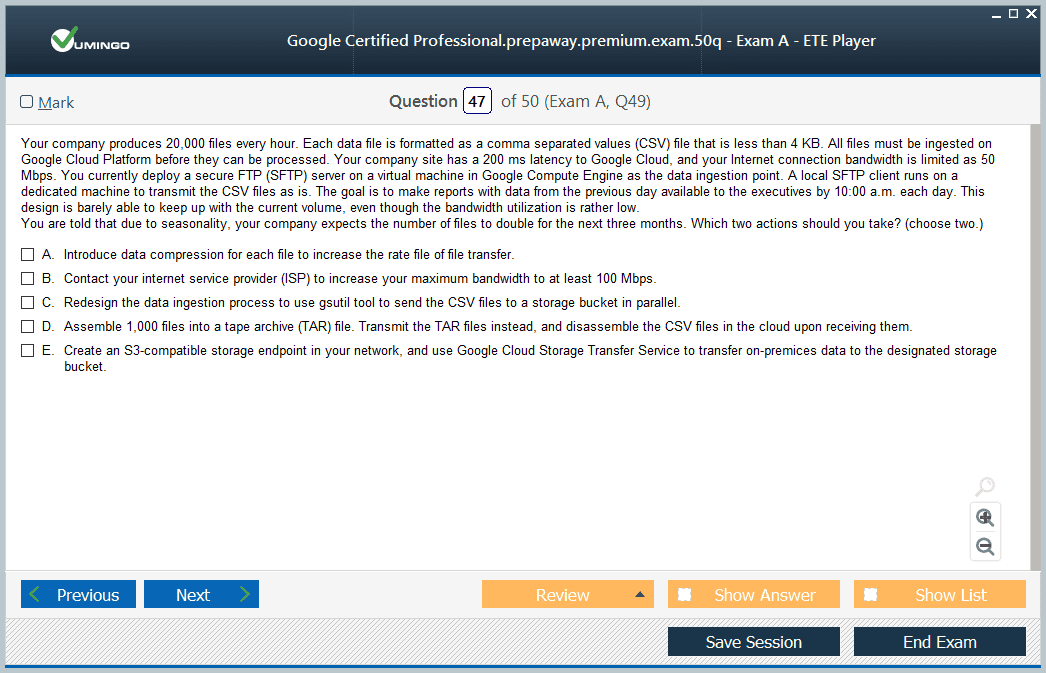

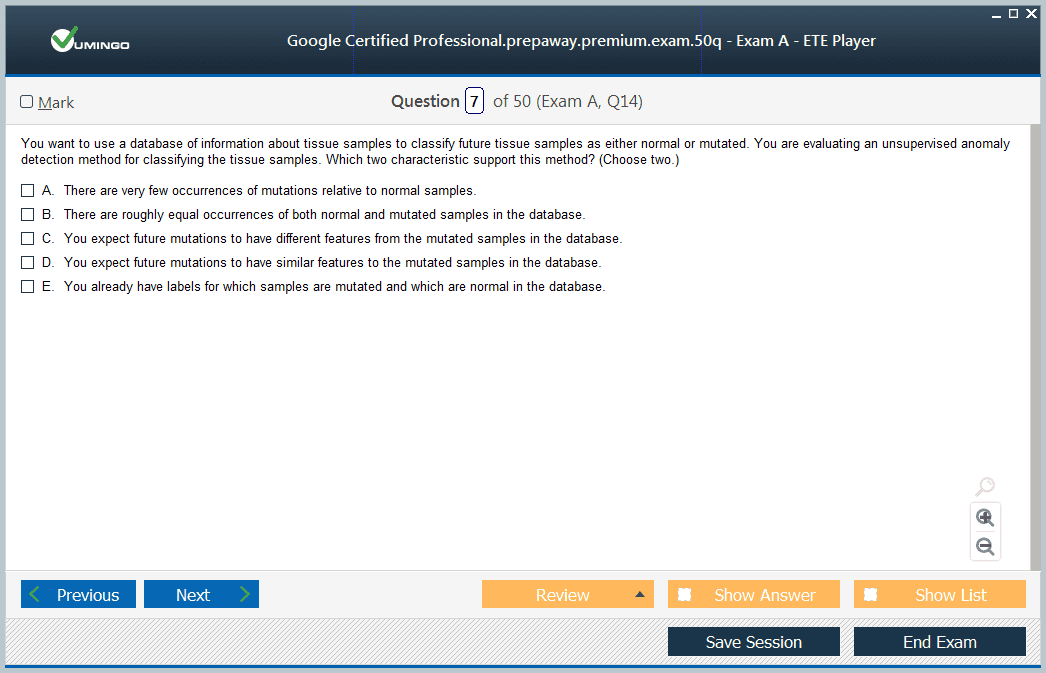

The professional data engineer exam is designed to evaluate a candidate’s ability to design and manage data processing systems on cloud platforms. The exam consists of multiple-choice and multiple-select questions that test the applicant’s understanding of key data engineering concepts. Candidates are expected to have practical knowledge of cloud services, database technologies, and programming languages commonly used in data engineering, such as Python, Java, or Scala.

The exam duration is typically two hours, and it is conducted in a controlled environment either online or at designated testing centers. The exam is offered in different languages, and candidates must select their preferred language at the time of registration. While there are no strict prerequisites for taking the exam, it is recommended that applicants have at least three years of industry experience and one year of hands-on experience with cloud-based data solutions.

Key Domains Covered In The Certification

To successfully pass the professional data engineer exam, candidates must be proficient in a number of core domains. These domains form the foundation of the exam structure and serve as a guide for effective preparation. The major domains include:

- Designing data processing systems: This involves selecting appropriate storage technologies, designing efficient data pipelines, and planning data workflows.

- Building and operationalizing data processing systems: Candidates must know how to construct robust pipelines, clean and transform data, and ensure real-time or batch processing is optimized.

- Operationalizing machine learning models: The certification assesses a candidate’s ability to deploy, manage, and monitor machine learning models in a production environment.

- Ensuring solution quality: This domain focuses on system reliability, security, compliance, scalability, and performance monitoring.

These domains represent the essential competencies required to manage end-to-end data engineering projects in a cloud environment.

Skills Acquired Through Certification Preparation

Preparing for the professional data engineer exam is an intensive process that builds a wide array of technical and analytical skills. One of the most significant skills developed is the ability to design data pipelines that can handle various types of data loads and formats. Candidates also learn to optimize storage systems, build real-time processing solutions, and manage data workflows efficiently.

Moreover, candidates gain practical exposure to cloud-based tools and services that are crucial in a modern data engineering toolkit. This includes data warehouse solutions, stream processing engines, and orchestration tools. Additionally, familiarity with data security practices, compliance frameworks, and performance optimization techniques enhances the candidate’s ability to manage complex data ecosystems.

Beyond technical skills, the preparation process also fosters critical thinking, problem-solving, and effective decision-making. These soft skills are invaluable in real-world scenarios where data engineers must adapt to changing requirements and make strategic choices under time constraints.

Benefits Of Becoming A Certified Data Engineer

Earning the professional data engineer certification can unlock numerous career opportunities in both the public and private sectors. As organizations increasingly move their operations to the cloud, the need for qualified data engineers continues to grow. Certified professionals often find roles in industries such as finance, healthcare, retail, and technology, where data plays a central role in decision-making.

One of the primary benefits of certification is enhanced credibility. Employers often use certifications as a benchmark to identify candidates who possess verified skills and knowledge. In many cases, certified professionals also enjoy higher earning potential, more responsibilities, and quicker career progression compared to their non-certified peers.

In addition to individual benefits, organizations also gain from hiring certified professionals. These individuals are better equipped to build scalable and reliable data infrastructure, improve operational efficiency, and reduce downtime through proactive monitoring and troubleshooting.

Challenges Faced During Exam Preparation

While the benefits of obtaining a data engineer certification are substantial, the preparation process is not without its challenges. One of the most common obstacles is the broad range of topics covered in the exam. Candidates often find it difficult to allocate sufficient time to study each domain comprehensively, especially if they are working professionals.

Another challenge is the technical depth required in certain areas. For example, understanding the nuances of machine learning pipelines or stream processing engines demands not only theoretical knowledge but also hands-on experience. Inadequate practice with real-world scenarios can hinder the candidate’s ability to perform well in these sections.

Time management is also a critical factor. The exam includes a variety of questions that require analytical thinking and fast decision-making. Without adequate practice, candidates may struggle to complete the exam within the allotted time. Therefore, a well-structured preparation plan that includes practice tests, lab sessions, and review cycles is essential.

Effective Preparation Strategies

To overcome these challenges, candidates must adopt a structured and disciplined approach to preparation. The first step is to thoroughly review the exam guide to understand the weighting of each domain and prioritize accordingly. Following that, candidates should create a study plan that includes daily goals, weekly assessments, and periodic revisions.

Practical experience is equally important. Setting up a cloud environment and building end-to-end data solutions is a valuable exercise. This hands-on exposure will reinforce theoretical concepts and build confidence. Additionally, reviewing case studies and solving sample problems can provide insights into common patterns and best practices.

Group study sessions and peer discussions can also be beneficial. Interacting with others who are preparing for the exam can help clarify doubts, exchange resources, and maintain motivation. Consistency, persistence, and adaptability are the key traits that contribute to successful certification.

Real-World Applications Of Data Engineering Skills

The skills acquired through certification are not limited to academic or theoretical exercises. They have real-world applications across various industries. For example, in the retail sector, data engineers design systems that track customer behavior and preferences, enabling personalized recommendations and targeted marketing.

In the healthcare industry, data engineers build platforms that aggregate and analyze patient data from multiple sources, helping physicians make informed decisions and improve patient outcomes. Similarly, in finance, data engineering is used to detect fraudulent transactions, optimize trading algorithms, and manage risk more effectively.

The demand for real-time analytics is another area where certified data engineers play a crucial role. Whether it is monitoring sensor data in manufacturing or tracking user interactions in digital platforms, real-time insights are made possible by efficient data pipelines and processing systems designed by skilled engineers.

Understanding Data Engineering Architecture

The foundation of a data engineer's role begins with understanding the architecture that supports data operations. A professional data engineer certification focuses heavily on ensuring candidates are comfortable designing and implementing scalable and secure data architecture on a cloud platform. This includes knowing how data moves across various layers, how it is stored, and how it can be retrieved and processed efficiently.

At its core, data architecture involves data ingestion, processing, storage, and analysis. Candidates must understand the different ingestion methods, such as batch and stream processing, and choose the appropriate one based on the use case. For instance, a real-time analytics application would benefit more from stream processing, while a financial reporting system might rely on batch processing for accuracy and completeness.

Data processing layers can involve transformations, filtering, and aggregation of raw data. These operations are often performed using cloud-native services that provide managed solutions for processing large volumes of data in parallel. Following processing, data is stored in optimized storage systems based on its structure, frequency of access, and purpose. This layered approach forms the backbone of modern data platforms.

Choosing The Right Storage And Processing Technologies

A key part of the professional data engineer certification exam involves evaluating a candidate's ability to choose the right storage and processing technologies. There are various options available for storing structured, semi-structured, and unstructured data, and each serves a different purpose depending on the use case.

For structured data, candidates should know how to implement relational databases or data warehouses that offer strong consistency, optimized queries, and business intelligence support. For semi-structured data such as JSON or XML, document stores are often better suited. Unstructured data, like images or videos, is typically stored in object storage systems optimized for large-scale content.

In terms of processing, data engineers should understand the advantages of both batch and streaming methods. Batch processing is useful for periodic computations over large datasets, while streaming allows for near-real-time analytics. Cloud-native services typically support both modes, and engineers must know how to use them together in hybrid architectures when necessary.

Knowing how to balance cost, performance, and scalability is essential when choosing among these technologies. Improper selection can result in bottlenecks, data loss, or unnecessary expenditure. The exam tests how well candidates can weigh trade-offs and apply best practices in real-world scenarios.

Designing Robust Data Pipelines

A major responsibility of a professional data engineer is designing data pipelines that ensure reliable, efficient, and consistent movement of data from source to destination. These pipelines must be automated to support business continuity and minimize manual intervention. The exam includes questions that test your ability to design pipelines that include data validation, transformation, and delivery.

One of the essential elements in designing robust pipelines is understanding data quality. Engineers must implement checks to detect missing values, duplicates, and inconsistencies. Failure to do so can lead to inaccurate analytics and poor decision-making. The transformation process must also be optimized for performance to ensure that even large datasets can be processed within a reasonable time.
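The checks described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production validator: the function name `check_quality`, the sample `orders` rows, and the field names are all hypothetical, and a real pipeline would usually run such checks inside its processing framework.

```python
from collections import Counter

def check_quality(rows, required_fields, key_field):
    """Report missing required values and duplicated keys in a list of dict rows."""
    missing = []
    for index, row in enumerate(rows):
        for field in required_fields:
            # Treat None and empty strings as missing values.
            if row.get(field) in (None, ""):
                missing.append((index, field))
    key_counts = Counter(row.get(key_field) for row in rows)
    duplicates = sorted(k for k, n in key_counts.items() if k is not None and n > 1)
    return {"missing": missing, "duplicate_keys": duplicates}

# Hypothetical sample data with one blank customer, one null amount, one repeated key.
orders = [
    {"order_id": 1, "customer": "ana", "amount": 20.0},
    {"order_id": 2, "customer": "", "amount": 15.5},
    {"order_id": 2, "customer": "bo", "amount": 15.5},
    {"order_id": 3, "customer": "cy", "amount": None},
]

report = check_quality(orders, ["customer", "amount"], "order_id")
print(report)
```

A pipeline could refuse to load a batch, or route it to a quarantine location, whenever either list in the report is non-empty.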

Data lineage and auditability are also key aspects. Knowing the origin of data and how it has been altered over time is important for compliance, debugging, and trust in data. Engineers must integrate metadata tracking and logging mechanisms into pipelines to maintain transparency and facilitate troubleshooting.

Automation tools and orchestration services are used to schedule, monitor, and manage these pipelines. The certification tests familiarity with such tools and the ability to implement them effectively in a production environment.

Implementing Machine Learning In Data Pipelines

While machine learning is often associated with data scientists, data engineers also play a crucial role in operationalizing models. The professional data engineer certification includes a domain focused on deploying and maintaining machine learning pipelines in production.

Data engineers must know how to prepare data for machine learning, including cleaning, normalization, and feature engineering. They are also responsible for setting up environments where models can be trained using scalable computing resources. This might include provisioning virtual machines, using containerization, or employing managed services that automate training tasks.
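As a small illustration of the cleaning and normalization steps mentioned above, the following sketch drops missing values and applies min-max scaling. The function name `clean_and_scale` is hypothetical, and min-max scaling is only one of several common normalization choices.

```python
def clean_and_scale(raw_values):
    """Drop missing values, then rescale the rest to the range [0, 1]."""
    cleaned = [v for v in raw_values if v is not None]
    if not cleaned:
        return []
    low, high = min(cleaned), max(cleaned)
    if high == low:
        # All values identical: avoid dividing by zero.
        return [0.0] * len(cleaned)
    return [(v - low) / (high - low) for v in cleaned]

scaled = clean_and_scale([10, None, 20, 30])
print(scaled)
```

In practice the minimum and maximum would be computed on the training data and reused unchanged when scaling data at serving time.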

Once a model is trained, data engineers help deploy it into production systems where it can provide predictions on live data. They must ensure that these deployments are secure, reliable, and maintainable. Monitoring performance, accuracy, and resource usage is essential to ensure the model continues to meet business requirements.

The exam includes questions on model versioning, retraining strategies, and the selection of appropriate infrastructure for training and serving models. Candidates must demonstrate the ability to work alongside data scientists to maintain an end-to-end machine learning lifecycle.

Managing Data Security And Compliance

Security and compliance are critical aspects of data engineering that are covered extensively in the certification. Data breaches can result in legal penalties, reputational damage, and loss of trust. Engineers must therefore understand how to implement encryption, access controls, and secure data transmission mechanisms.

Data must be encrypted both at rest and in transit. Engineers are expected to use managed key services or customer-supplied keys depending on the sensitivity of the data. Role-based access control should be implemented to ensure that only authorized users can access specific datasets.
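The idea behind role-based access control can be shown with a toy permission check. This is a sketch under stated assumptions: the roles, users, and dataset names are invented for illustration, and on a real cloud platform these rules would live in the provider's identity and access management service rather than in application code.

```python
# Hypothetical role definitions: each role grants (dataset, action) pairs.
ROLE_PERMISSIONS = {
    "analyst": {("sales", "read")},
    "engineer": {("sales", "read"), ("sales", "write"), ("raw_events", "read")},
}

# Hypothetical user-to-role assignments.
USER_ROLES = {"dana": ["analyst"], "eli": ["engineer"]}

def is_allowed(user, dataset, action):
    """Return True if any of the user's roles grants the action on the dataset."""
    return any(
        (dataset, action) in ROLE_PERMISSIONS.get(role, set())
        for role in USER_ROLES.get(user, [])
    )

print(is_allowed("dana", "sales", "read"))    # analyst may read sales
print(is_allowed("dana", "sales", "write"))   # analyst may not write
print(is_allowed("unknown", "sales", "read")) # unknown users get nothing
```

Permissions attach to roles rather than to individual users, so changing what analysts may do is a single edit to the role definition.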

Compliance with regulations such as data protection acts is another area of focus. Engineers must design systems that enable data deletion, anonymization, and access logging. This is particularly important in industries that handle personal or financial information, such as healthcare or banking.
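One common building block here is replacing direct identifiers with keyed hashes. The sketch below uses Python's standard `hmac` and `hashlib` modules; the function names, the sample record, and the secret are hypothetical. Strictly speaking this is pseudonymization rather than full anonymization, because anyone holding the secret can recompute the mapping.

```python
import hashlib
import hmac

def pseudonymize(value, secret):
    """Replace a value with a keyed SHA-256 digest (hex string)."""
    return hmac.new(secret, value.encode("utf-8"), hashlib.sha256).hexdigest()

def anonymize_record(record, sensitive_fields, secret):
    """Return a copy of the record with sensitive fields pseudonymized."""
    return {
        key: pseudonymize(str(value), secret) if key in sensitive_fields else value
        for key, value in record.items()
    }

# Hypothetical secret; in practice it would come from a managed key or secret store.
SECRET = b"demo-secret"
patient = {"email": "ana@example.com", "country": "PT"}
masked = anonymize_record(patient, {"email"}, SECRET)
print(masked)
```

Because the digest is deterministic for a given secret, the masked column can still be used for joins and counts without exposing the original value.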

The certification includes scenarios where candidates must identify potential security risks and recommend mitigation strategies. This might involve securing pipelines, isolating network resources, or implementing monitoring tools to detect unusual activity.

Monitoring And Troubleshooting Data Systems

Maintaining system health is essential for reliable data operations. A data engineer’s job does not end after deployment. Ongoing monitoring, logging, and alerting are vital for ensuring smooth operation of data pipelines and platforms.

Engineers must implement monitoring tools to track data flow, system load, latency, and failure rates. Alerts can be configured to notify administrators of performance degradation or failed jobs. Engineers must also be proficient in interpreting logs to identify and fix issues.
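A simple threshold-based alert rule illustrates the idea. The function `evaluate_alerts`, the thresholds, and the job records are assumptions made for this sketch; managed monitoring services express the same logic as alerting policies over collected metrics.

```python
def evaluate_alerts(job_runs, max_failure_rate=0.1, max_latency_s=300):
    """Return alert messages for a batch of job run records."""
    if not job_runs:
        return []
    alerts = []
    failures = sum(1 for run in job_runs if run["status"] == "failed")
    failure_rate = failures / len(job_runs)
    if failure_rate > max_failure_rate:
        alerts.append(f"failure rate {failure_rate:.0%} exceeds {max_failure_rate:.0%}")
    slow_jobs = [run["job"] for run in job_runs if run["latency_s"] > max_latency_s]
    if slow_jobs:
        alerts.append("slow jobs: " + ", ".join(slow_jobs))
    return alerts

# Hypothetical run history: one failure and one slow job out of four runs.
runs = [
    {"job": "ingest", "status": "ok", "latency_s": 40},
    {"job": "transform", "status": "failed", "latency_s": 10},
    {"job": "load", "status": "ok", "latency_s": 420},
    {"job": "report", "status": "ok", "latency_s": 55},
]
alerts = evaluate_alerts(runs)
for message in alerts:
    print(message)
```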

Another important concept is auto-scaling, which allows systems to adjust resource usage based on demand. This reduces costs while maintaining performance. Engineers are expected to know how to configure such features and troubleshoot problems related to scaling.
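The core of an auto-scaling decision can be sketched as a function from observed demand to a bounded worker count. The name `desired_workers` and its parameters are hypothetical; real autoscalers also smooth their input over time to avoid reacting to short spikes.

```python
import math

def desired_workers(backlog, per_worker_capacity, min_workers=1, max_workers=10):
    """Workers needed to clear the backlog, clamped to configured bounds."""
    needed = math.ceil(backlog / per_worker_capacity)
    return max(min_workers, min(max_workers, needed))

print(desired_workers(0, 100))     # idle: stays at the minimum
print(desired_workers(250, 100))   # moderate load: scales up
print(desired_workers(5000, 100))  # heavy load: capped at the maximum
```

The lower bound keeps the system responsive when idle, and the upper bound caps cost, which is why scaling problems often trace back to one of these two limits.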

The certification tests these abilities through case-based questions that require interpreting metrics and recommending actions. Effective monitoring strategies ensure that issues are detected early and resolved before impacting downstream systems or users.

Ensuring Scalability And Efficiency

Designing systems that scale efficiently is another important area covered by the exam. Scalability refers to a system’s ability to handle increasing workloads without significant performance loss. Engineers must understand horizontal and vertical scaling, distributed processing, and load balancing.

Efficiency goes hand-in-hand with scalability. A highly scalable system that is inefficient in resource usage can incur high costs. Engineers must optimize queries, use partitioning strategies, and eliminate bottlenecks to ensure systems run cost-effectively.

Caching mechanisms can be used to store frequently accessed data, reducing the load on databases and improving response time. Materialized views and denormalized tables can also be used to accelerate analytics. Engineers must know when and how to apply these techniques.
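In-process caching can be demonstrated with `functools.lru_cache` from the Python standard library. The lookup function and its counter are hypothetical stand-ins for an expensive database query; shared caches in real platforms are usually separate services, but the effect on backend load is the same.

```python
from functools import lru_cache

backend_calls = {"count": 0}

@lru_cache(maxsize=128)
def lookup_customer_tier(customer_id):
    """Pretend to query a database; the counter records real backend hits."""
    backend_calls["count"] += 1
    return "gold" if customer_id % 2 == 0 else "standard"

# Five lookups, but only two distinct customers.
for customer_id in [1, 2, 1, 2, 1]:
    lookup_customer_tier(customer_id)

print(backend_calls["count"])             # backend was hit once per distinct id
print(lookup_customer_tier.cache_info())  # hit and miss statistics
```

A cache like this serves stale results if the underlying data changes, so engineers must also decide how entries expire or get invalidated.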

Understanding the cost models of various services is important for designing efficient architectures. The exam tests candidates on trade-offs between performance and cost, pushing them to make decisions that benefit both technical performance and business outcomes.

Building Reliable And Portable Systems

Reliability and portability are additional focus areas for certified data engineers. A reliable system continues to function correctly even in the face of failures or unusual conditions. Engineers must design systems with redundancy, failover mechanisms, and data recovery processes.

Portability refers to the ability to move or replicate systems across different environments. This is important for hybrid and multi-cloud strategies. Engineers must understand containerization and infrastructure-as-code tools to create reproducible environments.

Data backup, replication, and disaster recovery are key reliability concerns. Engineers should know how to implement strategies that minimize downtime and data loss. The exam may include questions about designing systems that meet specific reliability requirements.

Portability also involves compatibility with various formats and systems. Engineers must use standard protocols, data models, and configuration strategies to ensure that systems can be reused or migrated easily without major rework.

Integrating With Business Intelligence Tools

Data engineers often collaborate with analysts and decision-makers who use business intelligence tools to generate reports and dashboards. As such, data platforms must be designed to support fast, reliable access to clean and structured data.

Data modeling is an essential skill in this context. Engineers must design schemas that are intuitive and performant. Fact and dimension tables, star schemas, and normalized designs are concepts that candidates must be comfortable with.

Engineers must also implement access layers that support role-based queries and maintain data governance standards. This ensures data consistency and security across the organization. By integrating well with business tools, engineers empower teams to derive insights that guide strategic decisions.

The certification exam may present scenarios that require understanding how data infrastructure supports business intelligence workflows. Engineers must know how to optimize systems to deliver low-latency, high-reliability access to data consumers.

Advancing Your Career With Certification

Achieving the professional data engineer certification is a milestone that signals readiness to take on complex data challenges. The credential enhances your credibility and makes you a valuable asset to employers seeking skilled professionals capable of managing cloud-based data systems.

Certified engineers are often given responsibilities that involve architectural planning, platform migration, and team leadership. The certification acts as a differentiator in the job market and can lead to promotions, salary increases, and broader project involvement.

Beyond career growth, certification provides a structured learning path that exposes candidates to best practices, emerging technologies, and industry trends. It is a worthwhile investment for anyone serious about a long-term career in data engineering.

Exploring Real-Time Data Processing Systems

Real-time data processing is a significant area of focus for professional data engineers. As businesses increasingly rely on up-to-the-minute information to make decisions, engineers must design systems that can process and analyze data as it is generated. This requires knowledge of tools and architectures specifically built for stream processing.

In a real-time architecture, data is ingested continuously from sources such as sensors, web logs, or application events. Engineers must implement stream processing frameworks that can transform, enrich, and aggregate data as it flows. These systems are designed to process events with minimal latency and deliver insights that drive immediate action.
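Aggregation over a stream is usually done in windows. The sketch below counts events in fixed, non-overlapping (tumbling) windows using plain Python; the function name and sample events are hypothetical, and it assumes events carry integer timestamps in seconds. Stream processing frameworks provide the same operation along with handling for late and out-of-order data.

```python
from collections import defaultdict

def tumbling_window_counts(events, window_s):
    """Count events per (window start, event type) for fixed-size windows."""
    counts = defaultdict(int)
    for timestamp, event_type in events:
        # Round the timestamp down to the start of its window.
        window_start = (timestamp // window_s) * window_s
        counts[(window_start, event_type)] += 1
    return dict(counts)

# Hypothetical (timestamp_seconds, event_type) pairs.
events = [(0, "click"), (12, "click"), (59, "view"), (60, "click"), (61, "click")]
window_counts = tumbling_window_counts(events, 60)
print(window_counts)
```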

Data engineers must also be aware of the trade-offs between throughput, latency, and accuracy. While real-time systems prioritize speed, some applications may still require exactly-once processing semantics to avoid duplication or loss. Understanding how to tune systems for various consistency levels is essential for success in this domain.
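One widely used way to approximate exactly-once results on top of at-least-once delivery is to make the consumer idempotent. The following sketch is an illustration of that idea only: the `IdempotentSink` class and the event shape are hypothetical, and a real system would persist the set of seen IDs together with the results.

```python
class IdempotentSink:
    """Apply each event at most once by remembering processed event IDs."""

    def __init__(self):
        self.seen_ids = set()
        self.total = 0

    def apply(self, event):
        if event["id"] in self.seen_ids:
            return False  # duplicate delivery: ignore
        self.seen_ids.add(event["id"])
        self.total += event["amount"]
        return True

sink = IdempotentSink()
# Event "b" is delivered twice, as can happen after a retry.
deliveries = [
    {"id": "a", "amount": 10},
    {"id": "b", "amount": 5},
    {"id": "b", "amount": 5},
    {"id": "c", "amount": 7},
]
applied = [sink.apply(event) for event in deliveries]
print(sink.total, applied)
```

The cost of this guarantee is the extra state and lookup per event, which is one concrete form of the latency and throughput trade-off described above.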

The exam tests a candidate’s ability to identify suitable use cases for real-time processing and to design systems that scale appropriately. Engineers must also integrate monitoring tools to detect delays, backpressure, or data anomalies in real time.

Working With Structured And Unstructured Data

Professional data engineers deal with various types of data, including structured, semi-structured, and unstructured formats. Structured data, such as that found in relational databases, follows a defined schema and is easily queried. Engineers must design schemas that optimize query performance and storage efficiency.

Semi-structured data includes formats like JSON and XML, which carry some structure but no rigid schema; CSV files are often grouped here as well because they have no enforced types. These formats are common in modern data exchange systems and require flexible storage and processing mechanisms. Engineers must understand how to ingest and parse these formats efficiently and how to convert them into structured representations for analytics.
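Converting a nested record into a flat, column-like row is a typical first step. The sketch below uses the standard `json` module; the `flatten` helper, the underscore naming convention, and the sample payload are assumptions made for illustration.

```python
import json

def flatten(obj, prefix=""):
    """Flatten nested dicts into a single-level dict with joined key names."""
    row = {}
    for key, value in obj.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            row.update(flatten(value, prefix=name + "_"))
        else:
            # Lists and scalars are kept as-is in this sketch.
            row[name] = value
    return row

raw = '{"id": 7, "user": {"name": "ana", "geo": {"country": "PT"}}, "tags": ["a", "b"]}'
flat_row = flatten(json.loads(raw))
print(flat_row)
```

Repeated fields such as `tags` need a separate decision, for example exploding them into one row per element or storing them in an array-typed column.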

Unstructured data includes images, videos, audio files, and documents. Managing this type of data requires object storage systems that support metadata tagging and indexing. Engineers must know how to design scalable architectures that support high-throughput ingestion and retrieval.

The certification exam includes scenarios that require working with all three types of data. Engineers must know how to integrate them into a cohesive data platform that serves both operational and analytical needs.

Implementing Batch Processing Workflows

Batch processing remains a core component of many data engineering tasks. It involves collecting and processing data at fixed intervals rather than in real time. Common examples include nightly ETL jobs, daily reports, or periodic data consolidation.

Engineers must design workflows that are resilient, scalable, and maintainable. This includes defining task dependencies, scheduling jobs, and handling failures. Orchestration tools help manage these workflows by providing logging, retries, and alerting mechanisms.
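Task dependencies and retries can be sketched with `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or later). The `run_workflow` function, the task names, and the simulated transient failure are hypothetical; orchestration services add scheduling, persistence, and alerting on top of this basic pattern.

```python
from graphlib import TopologicalSorter

def run_workflow(tasks, dependencies, max_retries=2):
    """Run tasks in dependency order, retrying each failed task a few times."""
    log = []
    for name in TopologicalSorter(dependencies).static_order():
        for attempt in range(1, max_retries + 2):
            try:
                tasks[name]()
                log.append((name, attempt, "ok"))
                break
            except Exception:
                log.append((name, attempt, "failed"))
        else:
            # Every attempt failed: stop so downstream tasks never run.
            raise RuntimeError(f"task {name} failed after {max_retries + 1} attempts")
    return log

transform_attempts = {"count": 0}

def extract():
    pass

def transform():
    # Simulate a transient error on the first attempt only.
    transform_attempts["count"] += 1
    if transform_attempts["count"] == 1:
        raise RuntimeError("transient error")

def load():
    pass

# Each task maps to the set of tasks it depends on.
dependencies = {"extract": set(), "transform": {"extract"}, "load": {"transform"}}
run_log = run_workflow({"extract": extract, "transform": transform, "load": load}, dependencies)
print(run_log)
```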

One challenge in batch processing is handling large volumes of data efficiently. Engineers must design pipelines that distribute processing across multiple nodes and avoid bottlenecks. Partitioning, parallelization, and caching strategies are essential for performance.
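Partitioning followed by parallel processing can be illustrated on a single machine. In this sketch the partition function, record shape, and worker count are hypothetical, and a thread pool stands in for the separate nodes of a distributed engine.

```python
import zlib
from concurrent.futures import ThreadPoolExecutor

def partition(records, key, num_partitions):
    """Assign each record to a partition using a stable hash of its key."""
    parts = [[] for _ in range(num_partitions)]
    for record in records:
        index = zlib.crc32(str(record[key]).encode("utf-8")) % num_partitions
        parts[index].append(record)
    return parts

def partial_sum(part):
    """Work done independently on one partition."""
    return sum(record["amount"] for record in part)

records = [{"user": f"u{i}", "amount": i} for i in range(100)]
parts = partition(records, "user", 4)

# Each partition is processed independently, then the partial results are combined.
with ThreadPoolExecutor(max_workers=4) as pool:
    total = sum(pool.map(partial_sum, parts))

print(total)
```

A stable hash keeps all records for the same key in the same partition, which matters for per-key aggregations; a badly skewed key distribution, however, still produces one overloaded partition.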

The professional data engineer exam tests knowledge of designing batch systems that meet performance, cost, and reliability targets. Engineers must understand how to select the right tools and design workflows that minimize runtime while maximizing data integrity.

Using Metadata For Data Governance

Metadata plays a critical role in managing and governing data platforms. It includes information about the origin, structure, quality, and usage of data. Engineers must design systems that collect and maintain metadata to support compliance, auditing, and operational efficiency.

One key aspect of metadata management is data lineage. This shows how data flows from source to destination, including all transformations along the way. Engineers must implement tools that automatically capture lineage information as data moves through pipelines.
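Automatic lineage capture can be as simple as wrapping every pipeline step so that it records what went in and what came out. The `run_step` wrapper and the two sample transformations below are hypothetical, intended only to show the pattern.

```python
def run_step(name, func, rows, lineage):
    """Run one transformation and append a lineage entry describing it."""
    result = func(rows)
    lineage.append({"step": name, "rows_in": len(rows), "rows_out": len(result)})
    return result

lineage = []
raw_rows = [{"id": 1, "amount": 5}, {"id": 2, "amount": -3}, {"id": 3, "amount": 8}]

valid_rows = run_step(
    "drop_negative", lambda rows: [r for r in rows if r["amount"] >= 0], raw_rows, lineage
)
enriched_rows = run_step(
    "add_doubled", lambda rows: [{**r, "doubled": r["amount"] * 2} for r in rows], valid_rows, lineage
)

for entry in lineage:
    print(entry)
```

Because the wrapper, not the transformation author, writes the entry, lineage stays complete even as new steps are added.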

Metadata also supports data discovery. By tagging datasets with business terms, descriptions, and classifications, users can more easily find and understand the data they need. Engineers must integrate catalog systems that support this functionality.

In regulated industries, metadata is essential for proving compliance with data protection laws. Engineers must ensure that metadata includes access logs, data retention policies, and security classifications. The exam evaluates a candidate’s ability to implement metadata strategies that align with organizational goals.

Handling Data Migration Projects

Data engineers are often involved in migrating systems from legacy platforms to modern cloud-based architectures. This process includes assessing the current environment, designing a target architecture, and implementing migration strategies that minimize downtime and data loss.

A successful migration project starts with data profiling and assessment. Engineers must understand the size, structure, and quality of existing data to plan the appropriate transfer methods. This might include export-import operations, replication, or custom scripts.

During migration, engineers must validate that data remains consistent and complete. This includes checksum comparisons, row counts, and data sampling. Engineers must also address schema differences, format conversions, and permission mapping.
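Row counts and checksums can be combined into a single table fingerprint that is compared between source and target. The sketch below uses `hashlib` and `json` from the standard library; the function name and sample tables are hypothetical, and it assumes rows are JSON-serializable dicts whose order does not matter.

```python
import hashlib
import json

def table_fingerprint(rows):
    """Return (row count, order-independent checksum) for a list of dict rows."""
    row_digests = sorted(
        hashlib.sha256(json.dumps(row, sort_keys=True).encode("utf-8")).hexdigest()
        for row in rows
    )
    combined = hashlib.sha256("".join(row_digests).encode("utf-8")).hexdigest()
    return len(rows), combined

source = [{"id": 1, "name": "ana"}, {"id": 2, "name": "bo"}]
migrated = [{"id": 2, "name": "bo"}, {"id": 1, "name": "ana"}]   # same data, new order
corrupted = [{"id": 1, "name": "ana"}, {"id": 2, "name": "b0"}]  # one changed value

print(table_fingerprint(source) == table_fingerprint(migrated))
print(table_fingerprint(source) == table_fingerprint(corrupted))
```

For very large tables the same comparison is usually done per partition or on a sample, so that a mismatch also indicates where to look.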

The certification exam includes scenarios where candidates must recommend migration paths and tools. They must also understand how to monitor the process, handle failures, and roll back changes if needed. This ensures business continuity and data reliability throughout the migration.

Developing Scalable Data Models

Data modeling is a foundational skill for data engineers. It involves designing schemas that represent business concepts in a way that supports performance and maintainability. Engineers must balance normalization for storage efficiency with denormalization for query performance.

Star and snowflake schemas are common in analytical databases. These models separate facts from dimensions and support efficient aggregation and filtering. Engineers must know how to implement these models using cloud-native tools.
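A minimal star schema, with one fact table and one dimension table, can be tried out using Python's built-in `sqlite3` module. The table and column names are hypothetical, and SQLite merely stands in for a cloud data warehouse here.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE dim_product (
        product_id INTEGER PRIMARY KEY,
        category   TEXT NOT NULL
    );
    CREATE TABLE fact_sales (
        sale_id    INTEGER PRIMARY KEY,
        product_id INTEGER NOT NULL REFERENCES dim_product(product_id),
        amount     REAL NOT NULL
    );
    INSERT INTO dim_product VALUES (1, 'books'), (2, 'games');
    INSERT INTO fact_sales VALUES (1, 1, 10.0), (2, 1, 5.0), (3, 2, 20.0);
    """
)

# Aggregate the facts, grouped by an attribute from the dimension table.
totals = conn.execute(
    """
    SELECT d.category, SUM(f.amount)
    FROM fact_sales AS f
    JOIN dim_product AS d ON d.product_id = f.product_id
    GROUP BY d.category
    ORDER BY d.category
    """
).fetchall()
print(totals)
```

A snowflake schema would further split `dim_product` into normalized sub-dimensions, for example a separate category table, at the cost of extra joins.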

In operational systems, entity-relationship models are used to capture real-world objects and their interactions. Engineers must ensure referential integrity, enforce constraints, and support transactional workloads.

The professional data engineer exam tests the ability to design data models that support both operational and analytical needs. Candidates must also understand how changes to the model affect downstream systems, including dashboards, reports, and machine learning models.

Automating Infrastructure With Code

Infrastructure as code is a modern approach that allows engineers to define and manage resources using configuration files. This approach improves consistency, repeatability, and version control across environments. Data engineers must be familiar with tools that support infrastructure automation.

By using templates or declarative scripts, engineers can provision databases, storage systems, processing engines, and access policies. This allows teams to deploy environments quickly and avoid configuration drift.
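The declarative idea behind these tools can be sketched as a comparison between a desired state and the actual state. The `plan` function and the resource names below are hypothetical and do not correspond to any specific tool's API; they only illustrate how drift is detected.

```python
def plan(desired, actual):
    """Compare desired and actual resources and list the changes needed."""
    desired_names, actual_names = set(desired), set(actual)
    return {
        "create": sorted(desired_names - actual_names),
        "update": sorted(
            name for name in desired_names & actual_names if desired[name] != actual[name]
        ),
        "delete": sorted(actual_names - desired_names),
    }

# Hypothetical desired state, as it might be declared in a version-controlled file.
desired = {
    "raw_bucket": {"location": "eu"},
    "warehouse": {"tier": "standard"},
    "events_topic": {"retention_days": 7},
}
# Hypothetical actual state, as discovered in the running environment.
actual = {
    "raw_bucket": {"location": "eu"},
    "warehouse": {"tier": "basic"},
    "old_vm": {"size": "small"},
}

changes = plan(desired, actual)
print(changes)
```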

Infrastructure as code also supports testing and auditing. Engineers can validate configurations before deployment and track changes over time. This is especially important in environments with strict compliance requirements.

The certification exam may present scenarios that require defining infrastructure as code strategies. Candidates must understand how to manage dependencies, handle secrets, and roll out updates with minimal disruption.

Supporting Data Science And Analytics Teams

Data engineers play a crucial role in supporting data scientists and analysts. They build the platforms and pipelines that feed clean, reliable data into models, dashboards, and reports. Collaboration between teams is essential to align infrastructure with analytical goals.

One way engineers support analysts is by building curated datasets. These are pre-aggregated, cleaned, and documented tables that reduce the complexity of working with raw data. Engineers must work with domain experts to understand what metrics and dimensions are most useful.

Engineers also support reproducibility. By versioning datasets, documenting transformations, and logging pipeline runs, they ensure that analysis can be replicated and audited. This increases trust in data and accelerates insight generation.

The exam includes questions that test understanding of how to design systems that support downstream users. Engineers must ensure that systems are scalable, secure, and easy to use across different analytical tools.

Ensuring Cost Efficiency In Data Platforms

Cloud-based data systems offer flexibility and scalability, but they also introduce cost considerations. Engineers must design systems that minimize waste and align with budget constraints. Cost efficiency is a key theme in the certification.

One way to reduce costs is by choosing the right storage tier. Cold storage is cheaper but slower, while hot storage is more expensive but provides low-latency access. Engineers must understand access patterns to make informed decisions.
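A small worked calculation shows why access patterns drive the tier decision. The prices below are hypothetical placeholders, not any provider's actual rates, and the model ignores factors such as minimum storage duration and operation charges.

```python
def monthly_cost(size_gb, reads_gb, storage_price, retrieval_price):
    """Storage charge plus retrieval charge for one month, in dollars."""
    return size_gb * storage_price + reads_gb * retrieval_price

# Hypothetical prices in dollars per GB (assumptions for illustration only).
HOT = {"storage_price": 0.020, "retrieval_price": 0.00}
COLD = {"storage_price": 0.004, "retrieval_price": 0.05}

def cheaper_tier(size_gb, reads_gb):
    hot = monthly_cost(size_gb, reads_gb, **HOT)
    cold = monthly_cost(size_gb, reads_gb, **COLD)
    return "cold" if cold < hot else "hot"

rarely_read = cheaper_tier(size_gb=1000, reads_gb=100)
often_read = cheaper_tier(size_gb=1000, reads_gb=1000)
print(rarely_read, often_read)
```

With these assumed prices, 1,000 GB read lightly is cheaper in cold storage, while the same data read in full every month is cheaper in hot storage because retrieval fees outweigh the storage savings.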

Compute costs can be optimized by using autoscaling, spot (preemptible) instances, and serverless architectures. Engineers must monitor usage patterns and adjust configurations to match demand. Query optimization also reduces compute usage by avoiding unnecessary processing.

The certification tests knowledge of pricing models and cost management tools. Candidates must understand how to design systems that meet performance goals without exceeding budget limits.

Building Event-Driven Data Architectures

Event-driven architectures are a modern design pattern that supports real-time responsiveness and scalability. In this model, systems react to events such as user actions, sensor updates, or data changes. Engineers must design pipelines that are triggered by these events and process them asynchronously.

Event-driven systems are often built using message queues or event streams. Engineers must configure producers and consumers, handle ordering guarantees, and implement retry mechanisms for failed messages.
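A retry mechanism with a dead-letter list can be sketched with an in-memory queue. This is a simplified stand-in for a managed message service; the consume function and the flaky_handler used to exercise it are hypothetical.

```python
from collections import deque

def consume(messages, handler, max_attempts=3):
    """Process messages; retry failures, then park them in a dead-letter list."""
    queue = deque((msg, 1) for msg in messages)
    processed, dead_letters = [], []
    while queue:
        msg, attempt = queue.popleft()
        try:
            handler(msg)
            processed.append(msg)
        except Exception:
            if attempt < max_attempts:
                queue.append((msg, attempt + 1))  # requeue at the back
            else:
                dead_letters.append(msg)  # give up after max_attempts
    return processed, dead_letters

calls = {}

def flaky_handler(msg):
    """Fails once for 'flaky' and every time for 'bad'."""
    calls[msg] = calls.get(msg, 0) + 1
    if msg == "bad" or (msg == "flaky" and calls[msg] < 2):
        raise ValueError(msg)

processed, dead_letters = consume(["ok", "flaky", "bad"], flaky_handler)
print(processed, dead_letters)
```

Note that requeuing at the back of the queue trades strict ordering for progress. A system that needs ordering guarantees would instead block the affected key or partition until the retry succeeds.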

These architectures are highly decoupled, allowing teams to build independent services that scale independently. Engineers must understand how to design systems that use event-based triggers to initiate data processing, model updates, or downstream notifications.

The exam includes scenarios where event-driven architecture is the best choice. Candidates must evaluate when to use this model and how to implement it using cloud-native services and best practices.

Applying Observability And Debugging Techniques

Observability is the practice of understanding system behavior through logs, metrics, and traces. Data engineers must implement observability strategies to detect problems, optimize performance, and meet service level objectives.

Metrics include data like processing time, error rates, and resource usage. Engineers must configure dashboards and alerts that highlight anomalies. Logs provide detailed records of system activity, which are essential for debugging and auditing.

Traces allow engineers to follow a request as it moves through multiple services. This is especially useful in distributed systems where problems may occur across components.

The certification assesses candidates' ability to design systems that are observable and easy to troubleshoot. Engineers must implement logging strategies, structure logs for searchability, and integrate tools that support real-time monitoring and alerting.
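One common way to structure logs for searchability is to emit each entry as a JSON object. The sketch below uses only Python's standard logging module; the JsonFormatter class, the "fields" convention, and the logger name are assumptions made for the example.

```python
import io
import json
import logging

class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""
    def format(self, record):
        entry = {
            "severity": record.levelname,
            "message": record.getMessage(),
            "logger": record.name,
        }
        # Merge any structured fields passed through `extra={"fields": {...}}`.
        entry.update(getattr(record, "fields", {}))
        return json.dumps(entry, sort_keys=True)

stream = io.StringIO()  # stands in for stdout or a log agent
handler = logging.StreamHandler(stream)
handler.setFormatter(JsonFormatter())
logger = logging.getLogger("pipeline.demo")
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False

logger.info("batch loaded", extra={"fields": {"job_id": "job-1", "rows": 120}})
entry = json.loads(stream.getvalue())
print(entry)
```

Because job_id and rows are separate keys rather than text inside the message, a log search tool can filter and aggregate on them directly.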

Promoting Data Literacy And Collaboration

As data becomes central to all parts of a business, promoting data literacy is essential. Engineers play a role by creating systems that are accessible, well-documented, and aligned with business needs.

This includes maintaining data dictionaries, documentation, and training materials. Engineers must also ensure that data systems support role-based access and comply with governance policies.

Collaboration with stakeholders is essential. Engineers must gather requirements, explain technical constraints, and support exploratory analysis. This helps align infrastructure with strategic goals and empowers users to make informed decisions.

The professional data engineer exam includes questions that test understanding of these interpersonal and organizational skills. Successful candidates know how to bridge the gap between technical systems and business users.

Understanding Data Privacy And Ethical Considerations

As data engineers build and manage systems that process sensitive information, understanding data privacy and ethics becomes essential. The professional data engineer certification emphasizes the importance of designing systems that respect user privacy and comply with legal requirements.

Data privacy includes implementing techniques like data masking, anonymization, and encryption to protect personally identifiable information. Engineers must design pipelines that minimize exposure to sensitive data while ensuring data usability for analytics.
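The sketch below illustrates two of these techniques on a single record: keyed hashing to pseudonymize an identifier while keeping it joinable, and partial masking of an email address. The key, field names, and helper functions are hypothetical, and a real pipeline would load the key from a secret manager.

```python
import hashlib
import hmac

SECRET_KEY = b"demo-key-not-for-production"  # hypothetical; never hard-code real keys

def pseudonymize(value):
    """Replace an identifier with a keyed hash: stable for joins, not reversible without the key."""
    return hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]

def mask_email(email):
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    return local[:1] + "***@" + domain

record = {"user_id": "u-1001", "email": "alice@example.com", "amount": 42.0}
safe = {
    "user_id": pseudonymize(record["user_id"]),
    "email": mask_email(record["email"]),
    "amount": record["amount"],  # non-sensitive measure stays usable for analytics
}
print(safe)
```

Pseudonymized data can still count as personal data under many regulations, so this approach reduces exposure but does not replace access controls or encryption.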

Ethical considerations extend beyond compliance. Engineers should advocate for transparency in data collection and usage, avoid bias in data processing, and ensure fairness in automated decision-making systems. The exam assesses candidates’ awareness of these principles and their ability to implement practical solutions.

Designing For Disaster Recovery And Business Continuity

Data systems must be resilient to failures caused by hardware issues, software bugs, or natural disasters. A professional data engineer must design architectures that support disaster recovery and business continuity.

This involves creating backup strategies that ensure data can be restored to a consistent state after a failure. Backup frequency, retention policies, and storage locations must be planned carefully to balance cost and recovery objectives.

Redundancy and failover mechanisms are also crucial. Engineers should design systems that can automatically switch to standby resources without disrupting operations. Multi-region deployments can help achieve higher availability and fault tolerance.

The certification exam tests knowledge of recovery point objectives, recovery time objectives, and how to implement these in cloud environments. Candidates should be able to design systems that meet organizational uptime requirements and minimize data loss.
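The two objectives can be made concrete with simple arithmetic: the recovery point objective bounds how much recent data may be lost, and the recovery time objective bounds how long the system may be down. The timestamps and targets below are hypothetical values chosen for illustration.

```python
from datetime import datetime, timedelta

def data_loss_window(last_backup, failure_time):
    """Data written after the last backup is lost when restoring from it."""
    return failure_time - last_backup

def downtime(failure_time, restored_time):
    """Time during which the system was unavailable."""
    return restored_time - failure_time

rpo = timedelta(hours=1)  # assumed target: lose at most 1 hour of data
rto = timedelta(hours=4)  # assumed target: be back within 4 hours

last_backup = datetime(2024, 1, 1, 11, 30)
failure_time = datetime(2024, 1, 1, 12, 15)
restored_time = datetime(2024, 1, 1, 15, 0)

meets_rpo = data_loss_window(last_backup, failure_time) <= rpo
meets_rto = downtime(failure_time, restored_time) <= rto
print(meets_rpo, meets_rto)
```

In this scenario 45 minutes of data are lost and the outage lasts 2 hours 45 minutes, so both targets are met. In the worst case the loss window equals the full backup interval, which is why backup frequency must be no longer than the RPO.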

Optimizing Data Processing With Parallelism

Handling large-scale data efficiently often requires parallel processing techniques. Data engineers must know how to break down tasks into smaller chunks that can be executed concurrently to reduce overall runtime.
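The split, process, and combine pattern can be sketched with Python's standard thread pool. The chunked and process_chunk helpers are hypothetical, and threads are used only to keep the example self-contained; CPU-bound work in practice would typically run in separate processes or on a distributed framework.

```python
from concurrent.futures import ThreadPoolExecutor

def chunked(items, size):
    """Split a list into consecutive chunks of at most `size` elements."""
    return [items[i:i + size] for i in range(0, len(items), size)]

def process_chunk(chunk):
    """Independent unit of work: here, a partial sum of squares."""
    return sum(x * x for x in chunk)

data = list(range(1, 101))
with ThreadPoolExecutor(max_workers=4) as pool:
    # Each chunk is processed concurrently; map preserves chunk order.
    partials = list(pool.map(process_chunk, chunked(data, 25)))

total = sum(partials)  # combine step
print(len(partials), total)
```

The pattern works because each chunk can be processed without knowledge of the others and the partial results combine with a simple, order-independent operation.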

Parallelism can be applied at multiple levels, including data partitioning, task scheduling, and distributed computing. Distributed data processing frameworks support these approaches by managing resource allocation and job coordination.

Understanding the underlying infrastructure is key to optimizing parallelism. Engineers should know how to avoid data skew, manage dependencies, and balance load across compute nodes to prevent bottlenecks.

The exam evaluates a candidate’s ability to design parallel processing workflows that maximize throughput and minimize latency while keeping costs under control.

Leveraging Cloud-Native Data Services

Cloud providers offer a rich ecosystem of managed data services that simplify building scalable and reliable data platforms. The professional data engineer certification expects candidates to be familiar with these services and how to integrate them effectively.

Cloud-native services include managed databases, data lakes, streaming platforms, orchestration tools, and machine learning APIs. Engineers must understand service limits, pricing models, and best practices for security and performance.

Choosing the right combination of services depends on workload requirements and organizational goals. Engineers must evaluate trade-offs and design architectures that leverage cloud-native advantages such as elasticity, automation, and global reach.

The exam tests how well candidates can map business needs to cloud service capabilities and optimize deployment for scalability, cost, and reliability.

Implementing Data Catalogs And Self-Service Platforms

As data ecosystems grow, maintaining visibility and accessibility becomes a challenge. Data catalogs and self-service platforms help users discover, understand, and use data effectively without overburdening engineering teams.

Data catalogs store metadata, including dataset descriptions, lineage, owners, and usage statistics. They provide search and filtering capabilities that enable users to find relevant data quickly.

Self-service platforms allow users to run queries, generate reports, and create dashboards without needing deep technical expertise. Engineers must ensure these platforms are secure, performant, and integrated with data governance policies.

The certification exam covers the design and deployment of such platforms, emphasizing usability, governance, and scalability. Candidates should demonstrate knowledge of tools and techniques that empower data consumers.

Managing Data Quality Through Automated Testing

Data quality issues can severely impact analytics and decision-making. Professional data engineers must implement automated testing to catch errors early in the data pipeline.

Automated data tests can check for schema conformance, null values, duplicates, and statistical anomalies. These tests run regularly and alert engineers to potential issues before data reaches users.
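A minimal version of such checks can be written as a plain function that returns a list of problems. The run_checks function, the schema format, and the sample rows are hypothetical; dedicated testing frameworks offer richer versions of the same idea.

```python
def run_checks(rows, schema, key):
    """Return human-readable problems: nulls, wrong types, and duplicate keys."""
    problems = []
    seen = set()
    for i, row in enumerate(rows):
        for column, expected_type in schema.items():
            value = row.get(column)
            if value is None:
                problems.append(f"row {i}: {column} is null")
            elif not isinstance(value, expected_type):
                problems.append(f"row {i}: {column} has type {type(value).__name__}")
        if row.get(key) in seen:
            problems.append(f"row {i}: duplicate {key}={row.get(key)}")
        seen.add(row.get(key))
    return problems

schema = {"order_id": int, "amount": float}
rows = [
    {"order_id": 1, "amount": 9.5},
    {"order_id": 2, "amount": None},   # null value
    {"order_id": 1, "amount": 3.0},    # duplicate key
    {"order_id": 3, "amount": "7"},    # wrong type
]
problems = run_checks(rows, schema, key="order_id")
for p in problems:
    print(p)
```

A pipeline step could fail or raise an alert whenever the returned list is non-empty, stopping bad data before it reaches users.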

Continuous integration and deployment practices extend to data pipelines, ensuring that changes are tested thoroughly. Engineers must design tests that are comprehensive yet efficient to avoid slowing down pipeline execution.

The exam assesses familiarity with testing frameworks and the ability to incorporate testing into pipeline workflows. Maintaining high data quality reduces operational risk and increases trust in data products.

Utilizing Data Encryption And Key Management

Protecting data confidentiality requires strong encryption both in transit and at rest. Data engineers must implement encryption protocols that meet organizational and regulatory requirements.

Managing encryption keys securely is a critical component. Engineers should use key management services that support rotation, auditing, and access controls. Keys must be protected from unauthorized access to prevent data breaches.

The certification tests understanding of cryptographic concepts and cloud provider tools. Candidates should know how to integrate encryption seamlessly into data storage and processing workflows.

Building Modular And Reusable Pipeline Components

Modularity enhances maintainability and scalability in data engineering. By designing reusable components, engineers can accelerate development and reduce errors.

Pipeline components such as ingestion modules, transformation functions, and validation routines should be designed to work independently and be configurable.

Using a modular design allows teams to test and update components without impacting the entire pipeline. It also promotes consistency across projects by reusing proven patterns.
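One way to picture modular components is as small configurable functions composed into a pipeline. The pipeline helper and the three steps below are hypothetical names, and the sketch assumes rows are plain dictionaries.

```python
from functools import reduce

def pipeline(*steps):
    """Compose independent steps into one callable that runs them in order."""
    return lambda rows: reduce(lambda acc, step: step(acc), steps, rows)

def drop_nulls(column):
    return lambda rows: [r for r in rows if r.get(column) is not None]

def rename(old, new):
    return lambda rows: [{(new if k == old else k): v for k, v in r.items()} for r in rows]

def to_float(column):
    return lambda rows: [{**r, column: float(r[column])} for r in rows]

# Each step is configured independently and can be reused in other pipelines.
clean_orders = pipeline(drop_nulls("amt"), rename("amt", "amount"), to_float("amount"))
result = clean_orders([{"id": 1, "amt": "9.5"}, {"id": 2, "amt": None}])
print(result)
```

Because each step takes rows in and returns rows out, it can be unit-tested on its own and swapped without touching the rest of the pipeline.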

The exam evaluates how well candidates can design modular pipelines and incorporate automation tools to manage dependencies and deployments.

Applying Data Partitioning And Sharding Techniques

Partitioning and sharding improve performance and scalability by dividing data into manageable chunks. Partitioning is typically based on data attributes such as date or region, while sharding distributes data across multiple databases or nodes.

Effective partitioning reduces query latency by limiting the data scanned. Sharding supports horizontal scaling by distributing load and storage across clusters.

Engineers must understand the implications of partition keys on data distribution and query patterns. Choosing poor partition keys can lead to hotspots and uneven workloads.
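The effect of key choice can be shown by hashing two candidate keys over the same events and counting rows per shard. The shard_for and load_per_shard helpers and the synthetic events are hypothetical; zlib.crc32 is used only because it is deterministic across runs, unlike Python's built-in string hash.

```python
import zlib
from collections import Counter

def shard_for(key, num_shards):
    """Map a key to a shard with a deterministic hash."""
    return zlib.crc32(key.encode("utf-8")) % num_shards

def load_per_shard(keys, num_shards):
    counts = Counter(shard_for(k, num_shards) for k in keys)
    return [counts.get(s, 0) for s in range(num_shards)]

# Synthetic workload: 90% of events come from one country.
events = [{"country": "US" if i % 10 else "NZ", "event_id": f"e{i}"} for i in range(1000)]

by_country = load_per_shard([e["country"] for e in events], 4)    # low-cardinality, skewed key
by_event_id = load_per_shard([e["event_id"] for e in events], 4)  # high-cardinality key
print(by_country, by_event_id)
```

Sharding by country sends at least 900 of the 1,000 events to a single shard, which is the hotspot the text warns about. The high-cardinality key spreads load more evenly, at the cost of scattering rows that a per-country query would want together.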

The professional data engineer exam includes questions on designing partitioning strategies that balance performance and maintainability.

Designing Secure Data Access And Authentication

Securing data access involves managing authentication, authorization, and auditing. Engineers must implement role-based access control systems that enforce least privilege principles.
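A role-based check with deny-by-default behavior can be sketched as a lookup from roles to permission sets. The role names and permission strings below are hypothetical and are not tied to any provider's IAM model.

```python
# Hypothetical role definitions: each role lists only what it needs.
ROLE_PERMISSIONS = {
    "analyst": {"dataset.read"},
    "engineer": {"dataset.read", "dataset.write"},
    "admin": {"dataset.read", "dataset.write", "policy.update"},
}

def is_allowed(user_roles, permission):
    """Grant access only if one of the user's roles explicitly includes the permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in user_roles)

analyst_can_read = is_allowed(["analyst"], "dataset.read")
analyst_can_write = is_allowed(["analyst"], "dataset.write")
unknown_role = is_allowed(["contractor"], "dataset.read")
print(analyst_can_read, analyst_can_write, unknown_role)
```

Unknown roles and unlisted permissions are denied automatically, which is the practical meaning of least privilege: access exists only where it has been granted on purpose.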

Authentication mechanisms include identity federation, multi-factor authentication, and integration with corporate directories. Engineers should design solutions that support seamless yet secure access.

Auditing access logs is essential for detecting unauthorized activities and supporting compliance. Engineers must ensure that logs are tamper-resistant and stored securely.

The certification tests knowledge of secure access frameworks and best practices for cloud environments. Candidates must design solutions that protect data while enabling legitimate usage.

Monitoring Data Pipeline Health And Performance

Continuous monitoring ensures that data pipelines operate smoothly and deliver data on time. Engineers should configure monitoring tools that track job success rates, latency, throughput, and resource utilization.

Alerting mechanisms notify teams of anomalies, failures, or performance degradation. Engineers must respond quickly to minimize impact and diagnose root causes.

Observability best practices include correlating logs with metrics and using distributed tracing to understand complex workflows.

The professional data engineer exam assesses the ability to implement comprehensive monitoring solutions that support proactive maintenance and rapid troubleshooting.

Handling Schema Evolution And Data Versioning

Data schemas often evolve as business requirements change. Engineers must design pipelines that handle schema changes gracefully without breaking downstream systems.

Techniques include schema versioning, backward and forward compatibility, and schema registries that manage definitions centrally.
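A backward-compatibility check can be sketched as a comparison of two schema versions. The rule used here is deliberately simplified (added fields need defaults, existing fields may not change type) and ignores details such as type promotion; the schema format and function name are hypothetical.

```python
def is_backward_compatible(old_schema, new_schema):
    """True if readers using new_schema can still read data written with old_schema."""
    for name, spec in new_schema.items():
        if name not in old_schema:
            if "default" not in spec:
                return False  # old records have no value to fill this field
        elif spec["type"] != old_schema[name]["type"]:
            return False      # existing field changed type
    return True

v1 = {"id": {"type": "int"}, "name": {"type": "string"}}
v2_ok = {**v1, "email": {"type": "string", "default": ""}}
v2_no_default = {**v1, "email": {"type": "string"}}
v2_type_change = {"id": {"type": "string"}, "name": {"type": "string"}}

results = [
    is_backward_compatible(v1, v2_ok),
    is_backward_compatible(v1, v2_no_default),
    is_backward_compatible(v1, v2_type_change),
]
print(results)
```

A schema registry would typically run a check of this kind when a new version is proposed and reject incompatible changes before any pipeline starts using them.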

Data versioning tracks changes to datasets over time, enabling reproducibility and auditing. Engineers should design systems that support easy rollback and comparison of data versions.
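One lightweight way to version a dataset is to derive an identifier from its content, so that any change produces a new version. The dataset_version function below is a hypothetical sketch that assumes rows are JSON-serializable dictionaries and small enough to hash in memory.

```python
import hashlib
import json

def dataset_version(rows):
    """Content-based version ID that does not depend on row order."""
    serialized = sorted(json.dumps(r, sort_keys=True) for r in rows)
    digest = hashlib.sha256("\n".join(serialized).encode("utf-8")).hexdigest()
    return digest[:12]

snapshot_a = [{"id": 1, "amount": 9.5}, {"id": 2, "amount": 3.0}]
snapshot_b = [{"id": 2, "amount": 3.0}, {"id": 1, "amount": 9.5}]  # same rows, reordered
snapshot_c = [{"id": 1, "amount": 9.5}, {"id": 2, "amount": 4.0}]  # one value changed

version_a = dataset_version(snapshot_a)
version_b = dataset_version(snapshot_b)
version_c = dataset_version(snapshot_c)
print(version_a == version_b, version_a == version_c)
```

Recording the version ID alongside each pipeline run makes it possible to tell later exactly which data an analysis or model was built from.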

The exam covers best practices for managing schema and data versions in dynamic environments.

Integrating Data From Multiple Sources

Modern data platforms aggregate data from diverse sources such as relational databases, APIs, files, and streaming systems. Engineers must design ingestion pipelines that handle varying formats, update frequencies, and reliability levels.

Integration challenges include schema harmonization, deduplication, and latency management. Engineers must implement data validation and transformation steps to unify disparate data.
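Schema harmonization and deduplication can be sketched on two small sources that describe the same customers with different field names. The source names, field mapping, and the "latest update wins" rule are assumptions made for the example.

```python
def harmonize(record, mapping):
    """Rename source-specific fields to the unified schema."""
    return {mapping.get(k, k): v for k, v in record.items()}

def deduplicate(records, key):
    """Keep one record per key, preferring the most recent `updated_at`."""
    latest = {}
    for r in records:
        current = latest.get(r[key])
        if current is None or r["updated_at"] > current["updated_at"]:
            latest[r[key]] = r
    return sorted(latest.values(), key=lambda r: r[key])

CRM_MAPPING = {"cust_id": "customer_id", "email_address": "email"}  # hypothetical
crm = [{"cust_id": 1, "email_address": "a@example.com", "updated_at": "2024-01-01"}]
billing = [
    {"customer_id": 1, "email": "a.new@example.com", "updated_at": "2024-03-01"},
    {"customer_id": 2, "email": "b@example.com", "updated_at": "2024-02-01"},
]

unified = deduplicate([harmonize(r, CRM_MAPPING) for r in crm] + billing, key="customer_id")
print(unified)
```

ISO-formatted date strings compare correctly as text, which keeps the example short; a production pipeline would parse timestamps and handle ties and missing values explicitly.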

The professional data engineer certification tests the ability to design robust integration workflows that support analytical and operational use cases.

Using Machine Learning Pipelines For Automation

Data engineers are increasingly responsible for building machine learning pipelines that automate data preprocessing, training, and deployment.

These pipelines must be scalable, repeatable, and monitored for model performance and drift.
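Drift monitoring can be illustrated with a very crude heuristic: measure how far the mean of recent feature values has moved from the training baseline, in units of the baseline's standard deviation. The drift_score function, the threshold, and the sample values are hypothetical, and production systems generally use more robust statistical tests.

```python
from statistics import mean, stdev

def drift_score(baseline, current):
    """Shift of the current mean from the baseline mean, in baseline standard deviations."""
    return abs(mean(current) - mean(baseline)) / stdev(baseline)

THRESHOLD = 2.0  # assumed alerting threshold

baseline = [10, 12, 11, 13, 9, 11, 10, 12]  # feature values seen at training time
stable = [11, 10, 12, 11]                   # recent values, similar distribution
shifted = [15, 16, 14, 15]                  # recent values after an upstream change

stable_alert = drift_score(baseline, stable) > THRESHOLD
shifted_alert = drift_score(baseline, shifted) > THRESHOLD
print(stable_alert, shifted_alert)
```

A check like this would run on a schedule against serving data and trigger retraining or investigation when the score crosses the threshold.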

Engineers must collaborate with data scientists to operationalize models, manage feature stores, and integrate model outputs into business systems.

The exam assesses knowledge of tools and practices for building and maintaining machine learning workflows in production.

Optimizing Query Performance For Analytics

Efficient query execution improves the responsiveness of analytics platforms. Engineers must optimize data layouts, indexing strategies, and query plans.

Techniques include partition pruning, predicate pushdown, and materialized views. Engineers must also be familiar with caching strategies and query federation.
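Partition pruning can be illustrated with a toy table stored as one list of rows per date. The scan function and the sample data are hypothetical; a real query engine applies the same idea using partition metadata rather than a dictionary.

```python
def scan(partitions, wanted_dates, predicate):
    """Read only the partitions named in `wanted_dates`, then filter their rows."""
    rows_read, matches = 0, []
    for date, rows in partitions.items():
        if date not in wanted_dates:
            continue  # partition pruning: skip the whole partition unread
        rows_read += len(rows)
        matches.extend(r for r in rows if predicate(r))
    return matches, rows_read

partitions = {
    "2024-01-01": [{"region": "eu", "amount": 5}, {"region": "us", "amount": 7}],
    "2024-01-02": [{"region": "eu", "amount": 3}],
    "2024-01-03": [{"region": "us", "amount": 9}, {"region": "eu", "amount": 1}],
}
total_rows = sum(len(rows) for rows in partitions.values())

matches, rows_read = scan(partitions, {"2024-01-03"}, lambda r: r["region"] == "eu")
print(matches, rows_read, total_rows)
```

The query touches 2 of the 5 stored rows because the date filter eliminates two partitions before any row is read. On services that bill by data scanned, that reduction translates directly into lower cost.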

The certification tests the ability to diagnose performance issues and implement optimizations that meet business SLAs.

Supporting Data Lineage And Impact Analysis

Understanding the origin and impact of data changes is vital for governance and troubleshooting. Data lineage tools capture the flow of data through transformations and systems.

Impact analysis helps assess how changes in data or schema affect downstream reports and applications.
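Impact analysis reduces to a graph traversal once lineage is recorded as edges from each dataset to its direct consumers. The lineage graph and asset names below are hypothetical.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets that read from it.
LINEAGE = {
    "raw.orders": ["staging.orders"],
    "staging.orders": ["mart.daily_sales", "mart.customer_ltv"],
    "mart.daily_sales": ["dashboard.revenue"],
    "mart.customer_ltv": ["ml.churn_model"],
}

def downstream(node, graph):
    """Breadth-first search for everything affected by a change to `node`."""
    seen, queue = set(), deque([node])
    while queue:
        for child in graph.get(queue.popleft(), []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return sorted(seen)

impacted = downstream("staging.orders", LINEAGE)
print(impacted)
```

Before changing a column in staging.orders, an engineer could use a list like this to notify the owners of the affected marts, dashboard, and model.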

Engineers must design systems that capture and visualize lineage information, enabling data users to trust and understand their data sources.

The exam includes scenarios that require designing lineage and impact analysis solutions aligned with organizational policies.

Promoting Continuous Learning And Adaptability

The data engineering field evolves rapidly with new tools, frameworks, and best practices emerging frequently. Professionals must commit to continuous learning to stay current.

Certification itself is part of this learning journey, helping engineers validate skills and identify knowledge gaps.

Employers value engineers who can adapt to change, adopt new technologies, and drive innovation within their teams.

The professional data engineer exam encourages a mindset of growth and lifelong learning.

Conclusion

Becoming a professional data engineer requires mastering a broad range of skills, tools, and concepts. The data engineer certification exam tests not only technical knowledge but also the ability to design and operate scalable, secure, and efficient data platforms that meet business needs. Throughout the journey, candidates must demonstrate expertise in areas such as real-time and batch processing, data modeling, pipeline automation, cloud services, and data governance.

A key takeaway is the importance of understanding the entire data lifecycle—from ingestion and processing to storage, analysis, and security. Data engineers must be comfortable working with diverse data types, including structured, semi-structured, and unstructured formats. They should also be skilled in designing data models that balance query performance with maintainability and storage efficiency.

Modern data engineering increasingly relies on cloud-native tools and managed services that offer elasticity and automation. Candidates need to evaluate trade-offs between cost, performance, and reliability when selecting technologies and architectures. Additionally, automating infrastructure and pipeline deployments through infrastructure as code and continuous integration helps improve system consistency and reduces manual errors.

Security and compliance are foundational concerns. Data engineers must implement strong encryption, access controls, and auditing to protect sensitive information. At the same time, they should embed privacy and ethical principles into system designs, ensuring that data usage aligns with regulatory requirements and organizational values.

Observability and monitoring enable proactive identification of issues and quick resolution. Building modular, reusable pipeline components supports scalability and maintainability, allowing teams to iterate and improve systems efficiently. Managing schema evolution and data versioning ensures that pipelines remain robust in dynamic environments.

Supporting downstream users such as data scientists, analysts, and business stakeholders is also vital. Creating curated datasets, metadata catalogs, and self-service platforms empowers these users while maintaining governance standards. Collaboration and communication between technical and non-technical teams enhance data literacy and drive better business outcomes.

The exam also highlights the growing role of data engineers in machine learning workflows, where automating data preparation and model deployment is becoming commonplace. Engineers must be adaptable and committed to continuous learning, as the landscape evolves rapidly with new technologies and methodologies emerging regularly.

In summary, the professional data engineer certification is more than a test of technical skills; it assesses a holistic understanding of data systems and their role within organizations. Success requires a balance of hands-on expertise, strategic thinking, and ethical awareness. Engineers who master these areas contribute significantly to their organizations by delivering reliable, secure, and scalable data solutions that unlock insights and support informed decision-making.

Preparing for the exam reinforces these competencies and builds confidence in handling real-world challenges. Whether designing complex data pipelines, optimizing cloud resources, or ensuring data quality, certified data engineers are equipped to meet the demands of modern data-driven enterprises. This certification serves as a valuable credential that demonstrates professionalism and a commitment to excellence in the fast-growing field of data engineering.

Google Professional Data Engineer practice test questions and answers, training courses, and study guides are uploaded in ETE file format by real users. The Professional Data Engineer on Google Cloud Platform certification exam dumps and practice test questions and answers are intended to help students study and pass.

