
F5 101 Application Delivery Fundamentals: Ultimate Professional Certification Manual

The F5 101 Application Delivery Fundamentals certification is the entry point into the F5 Networks professional certification ecosystem. It establishes a verified baseline of knowledge about application delivery concepts, networking fundamentals, and the core technologies that F5 products are built upon. F5 Networks has occupied a leading position in the application delivery controller market for decades, and its products, including the BIG-IP platform, NGINX, and cloud-based services, are deployed in some of the world's most demanding enterprise, government, and service provider environments. Earning the 101 certification signals to employers and clients that a professional has moved beyond casual familiarity with F5 technology and can demonstrate a structured, tested understanding of the foundational concepts that underpin the entire F5 product portfolio.

What makes the F5 101 particularly valuable as an entry-level credential is the breadth of networking and application delivery knowledge it validates. Unlike vendor certifications that focus narrowly on product configuration procedures, the 101 examination tests genuine comprehension of the protocols, architectures, and principles that application delivery controllers are designed to address. A candidate who passes the F5 101 understands not just what F5 products do but why they are needed, what problems they solve, and how the underlying technologies work at a level that supports informed decision-making about application delivery architecture. This conceptual depth distinguishes the credential from simpler product familiarity certifications and gives it lasting relevance even as specific product versions evolve.

The History of F5 Networks and How the Certification Program Developed

F5 Networks was founded in Seattle in 1996 with an initial focus on load balancing technology at a time when the explosive growth of the internet was creating unprecedented demand for tools that could distribute traffic across multiple servers and keep web applications available under heavy load. The company's early BIG-IP products established a reputation for reliability and performance that grew steadily through the late 1990s and 2000s as F5 expanded its product capabilities from basic load balancing into a comprehensive application delivery platform encompassing traffic management, security, access control, and application optimization. This expansion of product scope was paralleled by the development of a formal certification program designed to validate the knowledge required to work effectively with increasingly complex F5 deployments.

The F5 certification program was structured deliberately to create a progression from foundational knowledge through increasingly specialized technical expertise. The 101 examination serves as the common foundation that all F5 certification pathways require candidates to pass before pursuing higher-level credentials in specific technology tracks including Local Traffic Manager, DNS, Access Policy Manager, Advanced Firewall Manager, and Application Security Manager. This prerequisite structure ensures that professionals pursuing F5 specialty certifications share a common conceptual framework regardless of which specific product track they subsequently specialize in. The 101 has been updated periodically to reflect the evolution of application delivery technology, incorporating topics like cloud-native architectures, container networking, and modern security concepts that were not relevant when the certification was first introduced.

Breaking Down the Examination Content Domains and Their Coverage Areas

The F5 101 examination covers a range of content domains that collectively span the breadth of application delivery fundamentals. The OSI model and TCP/IP protocol suite form a foundational layer of the examination content, with candidates expected to understand how each protocol layer functions, how data is encapsulated as it moves through the stack, and how common protocols including HTTP, HTTPS, DNS, FTP, and SSL/TLS operate at specific layers. This protocol knowledge is not tested at a superficial level but requires understanding of header structures, state management, connection establishment and teardown processes, and the implications of protocol behavior for application delivery design.

Network infrastructure concepts including IP addressing, subnetting, routing protocols, switching, and network address translation are covered in sufficient depth to ensure candidates understand the network environment in which application delivery controllers operate. Load balancing concepts and algorithms represent a significant portion of the examination, covering round-robin, least connections, ratio-based, priority-based, and other distribution methods along with the health monitoring mechanisms used to detect server availability and route traffic away from failed resources. Application layer concepts including HTTP methods, cookies, headers, caching principles, compression, and SSL offloading are tested because these are the application delivery mechanisms that F5 products manipulate and optimize. Security concepts including common attack types, access control principles, and basic firewall behavior round out the examination's content domains and reflect the reality that application delivery and security have become inseparable disciplines in modern network architecture.

TCP/IP Protocol Knowledge That the Examination Tests in Depth

The TCP/IP protocol suite occupies a central position in the F5 101 examination content because application delivery controllers fundamentally operate by intercepting, inspecting, and manipulating TCP/IP traffic. Candidates must understand TCP connection management in detail, including the three-way handshake process that establishes connections, the four-way handshake that terminates them, the role of sequence and acknowledgment numbers in reliable delivery, the sliding window mechanism that implements flow control, and the congestion control algorithms that TCP employs to avoid overwhelming network paths. This depth of TCP knowledge is directly relevant to F5 product functionality because BIG-IP's connection management features, including TCP profile optimization, connection mirroring, and the full proxy architecture, are built on top of these fundamental TCP behaviors.
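To make the sequence and acknowledgment arithmetic concrete, the following minimal Python sketch models only the header fields involved in opening and closing a connection. The Segment type and helper names are hypothetical, the initial sequence numbers are arbitrary illustrative values (real stacks randomize them), and payload, windowing, and retransmission are not modeled.

```python
from dataclasses import dataclass
from typing import List, Optional

SEQ_SPACE = 2 ** 32  # TCP sequence numbers are 32-bit and wrap around


@dataclass(frozen=True)
class Segment:
    sender: str          # "client" or "server"
    flags: str           # control flags set on the segment
    seq: int             # sequence number
    ack: Optional[int]   # acknowledgment number, None when ACK is not set


def next_seq(n: int, consumed: int = 1) -> int:
    """Advance a sequence number; SYN and FIN each consume one number."""
    return (n + consumed) % SEQ_SPACE


def three_way_handshake(client_isn: int, server_isn: int) -> List[Segment]:
    """Segments that open a connection, given each side's initial sequence number."""
    return [
        Segment("client", "SYN", client_isn, None),
        Segment("server", "SYN+ACK", server_isn, next_seq(client_isn)),
        Segment("client", "ACK", next_seq(client_isn), next_seq(server_isn)),
    ]


def four_way_close(client_seq: int, server_seq: int) -> List[Segment]:
    """Segments that close a connection when the client closes first.

    client_seq and server_seq are the next sequence numbers each side would send.
    """
    return [
        Segment("client", "FIN+ACK", client_seq, server_seq),
        Segment("server", "ACK", server_seq, next_seq(client_seq)),
        Segment("server", "FIN+ACK", server_seq, next_seq(client_seq)),
        Segment("client", "ACK", next_seq(client_seq), next_seq(server_seq)),
    ]


for segment in three_way_handshake(client_isn=1000, server_isn=5000):
    print(segment)
```

Each acknowledgment number is the next sequence number the receiver expects, which is why the SYN and FIN flags, each consuming one number, produce the +1 steps. The close shown is the common case where the client closes first; either side may initiate it.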

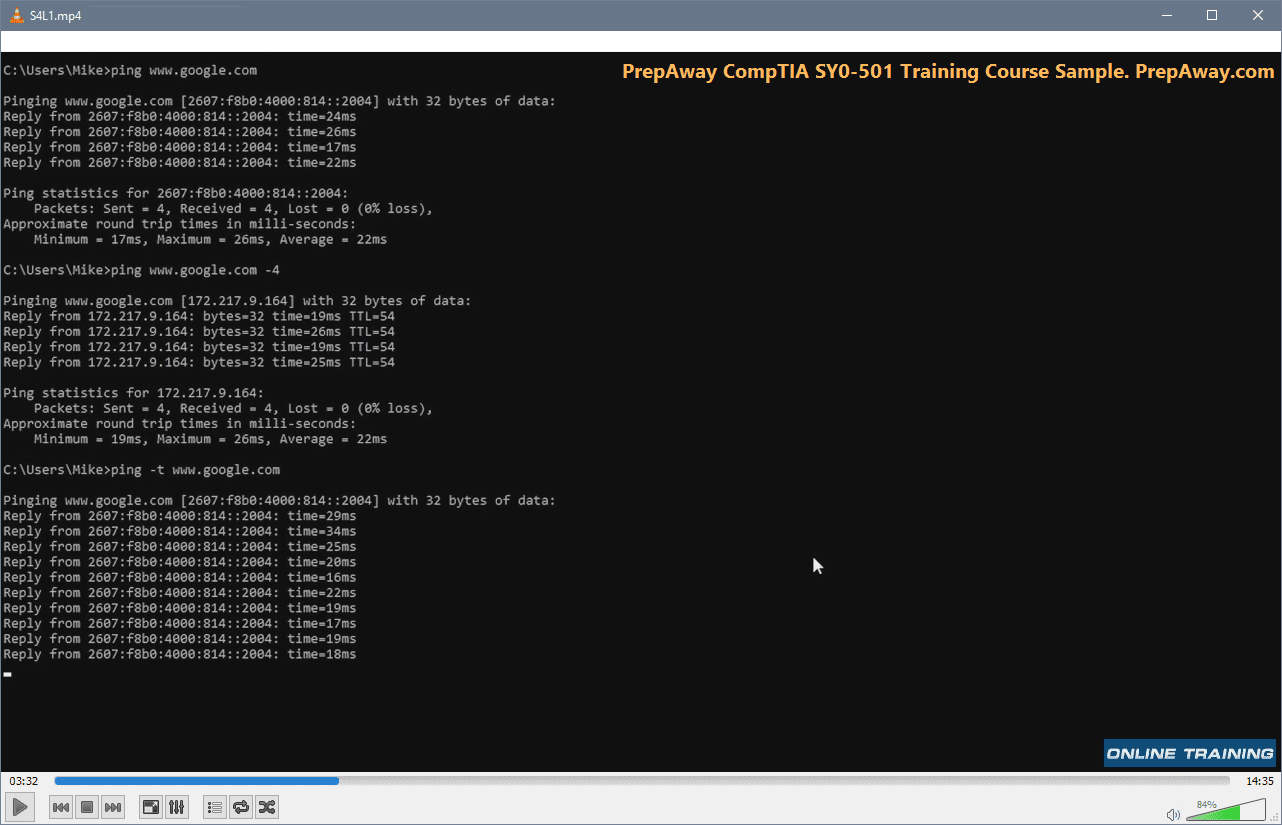

IP addressing and subnetting knowledge is tested at a level that requires genuine computational fluency rather than superficial familiarity. Candidates should be able to calculate network addresses, broadcast addresses, and valid host ranges for any given IP address and subnet mask combination, convert between dotted decimal and CIDR notation, and understand how variable length subnet masking enables efficient address allocation. IPv6 addressing concepts including address format, address types, and the coexistence mechanisms used during the transition from IPv4 are also covered in the examination. DNS protocol operation, including iterative and recursive query processes, zone types, common record types, and the role of DNS in application delivery scenarios, is another protocol area that receives substantial examination coverage and connects directly to the functionality of F5's Global Traffic Manager, now called BIG-IP DNS.
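The subnet arithmetic described above can be checked with Python's standard ipaddress module. This is a minimal sketch for IPv4 only; the function names are hypothetical, and /31 and /32 prefixes are rejected because they have no separate network and broadcast addresses.

```python
import ipaddress


def describe_subnet(address_with_prefix: str) -> dict:
    """Summarize the IPv4 subnet that contains a host, e.g. '192.168.10.77/26'."""
    # strict=False accepts a host address and masks it down to the network.
    net = ipaddress.IPv4Network(address_with_prefix, strict=False)
    if net.prefixlen > 30:
        raise ValueError("/31 and /32 do not have separate network and broadcast addresses")
    return {
        "network": str(net.network_address),
        "broadcast": str(net.broadcast_address),
        "netmask": str(net.netmask),
        "first_host": str(net.network_address + 1),
        "last_host": str(net.broadcast_address - 1),
        "usable_hosts": net.num_addresses - 2,
    }


def mask_to_prefix(netmask: str) -> int:
    """Convert a dotted-decimal mask such as 255.255.255.192 to a CIDR prefix length."""
    return ipaddress.IPv4Network(f"0.0.0.0/{netmask}").prefixlen


def prefix_to_mask(prefixlen: int) -> str:
    """Convert a CIDR prefix length such as 26 to a dotted-decimal mask."""
    return str(ipaddress.IPv4Network(f"0.0.0.0/{prefixlen}").netmask)


print(describe_subnet("192.168.10.77/26"))
```

Worked by hand, a /26 mask leaves 6 host bits, so each block holds 64 addresses. The host .77 falls in the .64 to .127 block, giving network 192.168.10.64, broadcast 192.168.10.127, and 62 usable hosts from .65 to .126, which is what the function reports.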

Load Balancing Principles and Algorithms That Form the Examination Core

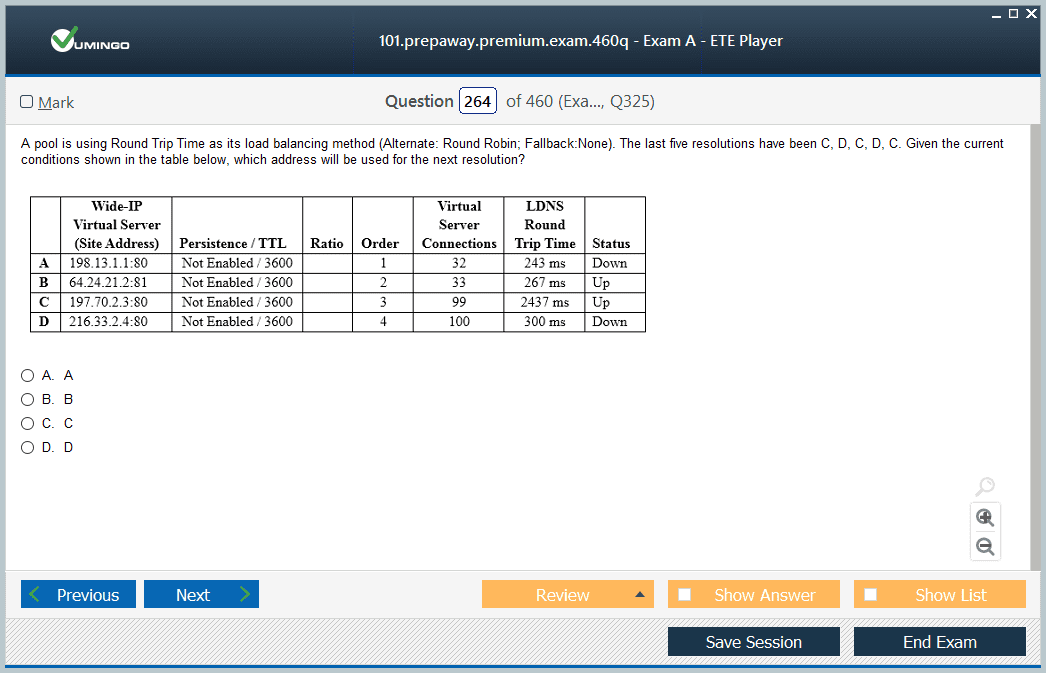

Load balancing is the foundational capability that established F5's market position and remains the conceptual heart of the F5 101 examination. Candidates must understand load balancing not merely as a traffic distribution mechanism but as a comprehensive availability and performance architecture that encompasses server health monitoring, session persistence, traffic prioritization, and connection management. The examination tests knowledge of multiple load balancing algorithms and the specific scenarios where each delivers optimal outcomes for different application types and traffic patterns.

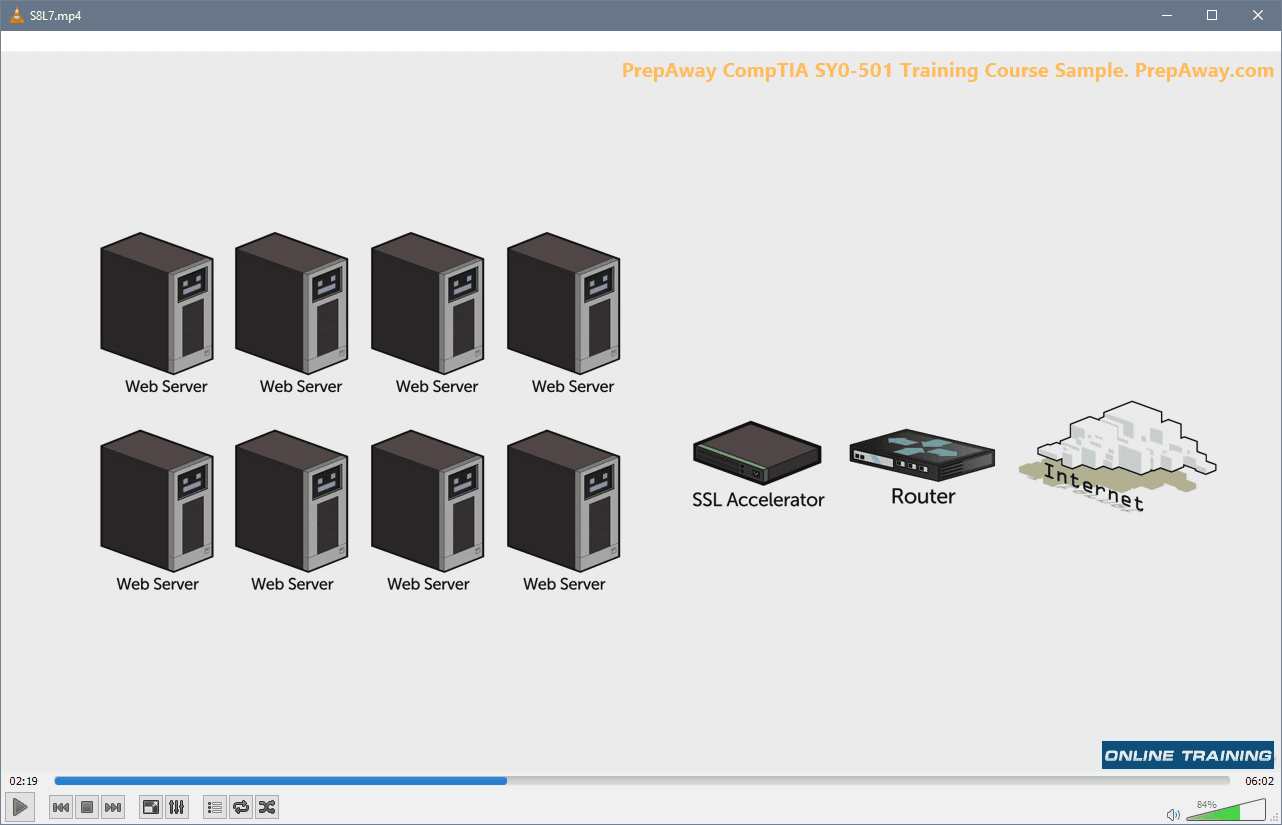

Round-robin load balancing distributes connections sequentially across available pool members and works well when server resources are approximately equal and connection processing times are relatively consistent. Least connections algorithms direct new connections to the server currently handling the fewest active connections, providing better distribution when some requests require significantly more processing time than others. Ratio-based algorithms allow administrators to assign different weights to pool members so that servers with greater processing capacity receive proportionally more traffic. Priority-based load balancing enables administrators to define groups of servers with different priority levels, directing traffic to lower-priority groups only when higher-priority members become unavailable or overloaded. Understanding when each algorithm is most appropriate requires comprehension of the underlying traffic and server characteristics that determine algorithm effectiveness, and the examination tests this applied understanding through scenario-based questions that describe specific environments and ask candidates to identify the most suitable approach.
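The four distribution methods above can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not F5's implementation: the Member fields and function names are hypothetical, the ratio method is a naive weighted rotation, and the priority logic assumes lower-priority groups are added to the eligible set when fewer than a minimum number of higher-priority members are available.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Member:
    name: str
    ratio: int = 1               # relative weight for ratio load balancing
    priority: int = 0            # higher number = preferred priority group
    active_connections: int = 0  # current load, used by least connections
    available: bool = True       # set by health monitoring


class RoundRobin:
    """Hand out available members in a fixed rotation, skipping unavailable ones."""

    def __init__(self, members: List[Member]) -> None:
        self.members = members
        self._next = 0

    def pick(self) -> Member:
        for _ in range(len(self.members)):
            member = self.members[self._next]
            self._next = (self._next + 1) % len(self.members)
            if member.available:
                return member
        raise RuntimeError("no available pool members")


class Ratio(RoundRobin):
    """Naive weighted rotation: a member with ratio 3 appears three times per cycle."""

    def __init__(self, members: List[Member]) -> None:
        super().__init__([m for m in members for _ in range(m.ratio)])


def least_connections(members: List[Member]) -> Member:
    """Pick the available member with the fewest active connections."""
    candidates = [m for m in members if m.available]
    if not candidates:
        raise RuntimeError("no available pool members")
    return min(candidates, key=lambda m: m.active_connections)


def priority_group(members: List[Member], min_active: int = 1) -> List[Member]:
    """Start with the highest-priority group and add lower groups until at
    least min_active available members are eligible for traffic."""
    eligible: List[Member] = []
    for prio in sorted({m.priority for m in members}, reverse=True):
        eligible += [m for m in members if m.priority == prio and m.available]
        if len(eligible) >= min_active:
            break
    return eligible
```

In this sketch priority_group only decides which members are eligible; one of the other methods would then choose among them, which mirrors how priority grouping is layered on top of a distribution algorithm.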

Health Monitoring and Pool Member Management Concepts

Health monitoring is one of the most critical capabilities of any application delivery controller because the entire premise of load balancing depends on accurate knowledge of which backend servers are available and capable of processing requests. The F5 101 examination tests health monitoring concepts in meaningful depth, covering the different monitor types available, how they are configured, and how their results influence traffic distribution decisions. Understanding health monitoring at this level requires knowledge of both the technical mechanisms through which monitors operate and the practical implications of different monitoring configurations for application availability.

Simple ping (ICMP) monitors verify network reachability but provide no information about whether an application running on a server is actually functional. TCP monitors establish connections to specific ports and verify that the server accepts connections, providing more meaningful availability verification than ping-level monitoring. HTTP and HTTPS monitors send actual application requests and evaluate the responses to determine whether the application is serving content correctly. More sophisticated application-specific monitors can check for specific response content, validate SSL certificates, or execute custom scripts that simulate real user interactions with an application. The examination covers the trade-offs between monitor complexity and monitoring overhead, since more thorough monitoring provides better accuracy but consumes more processing resources and generates more network traffic. Session persistence mechanisms, including cookie-based persistence, source address persistence, and SSL session ID persistence, are closely related to pool management concepts and are also tested in the examination.
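The difference between a TCP check and a content-checking HTTP check can be sketched in Python as follows. ICMP ping is left out because sending raw ICMP from Python generally requires elevated privileges. The function names and the "2xx status plus expected string" rule are assumptions for illustration; a real HTTP monitor would also send the request itself and apply an interval and timeout before marking a member down.

```python
import socket


def tcp_monitor(host: str, port: int, timeout: float = 2.0) -> bool:
    """Report a member as up if it completes a TCP connection on the given port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


def http_monitor_passes(raw_response: bytes, receive_string: str) -> bool:
    """Evaluate a raw HTTP response: require a 2xx status and an expected
    string somewhere in the body."""
    head, _, body = raw_response.partition(b"\r\n\r\n")
    status_line = head.split(b"\r\n", 1)[0].decode("latin-1")
    parts = status_line.split(" ", 2)  # e.g. ["HTTP/1.1", "200", "OK"]
    if len(parts) < 2 or not parts[0].startswith("HTTP/") or not parts[1].isdigit():
        return False
    status = int(parts[1])
    return 200 <= status < 300 and receive_string.encode() in body
```

The TCP check passes as soon as the handshake completes, even if the application behind the port is returning errors; the HTTP check catches that case, for example a 503 response, at the cost of generating a full request for every probe.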

SSL and TLS Knowledge Required for the F5 101 Examination

SSL and TLS knowledge represents one of the more technically demanding areas of the F5 101 examination because the cryptographic concepts involved are inherently complex and the operational implications for application delivery are significant. Candidates must understand the SSL/TLS handshake process including the exchange of certificates, the negotiation of cipher suites, the establishment of session keys, and the transition from handshake to encrypted data transfer. This understanding is directly relevant to F5 BIG-IP functionality because SSL offloading, one of the most widely deployed F5 use cases, involves the BIG-IP device terminating SSL connections from clients and establishing separate connections to backend servers, removing the encryption processing burden from application servers.

Certificate management concepts including certificate authorities, certificate chains, certificate validity periods, and common name validation are covered in the examination because these concepts are fundamental to implementing SSL offloading correctly. The difference between one-way SSL authentication where only the server presents a certificate and mutual TLS authentication where both client and server present certificates is an important distinction that the examination tests. Candidates should also understand the differences between SSL protocol versions and why older versions are considered insecure, as well as the concept of cipher suite selection and how weak cipher suites can undermine the security of encrypted connections regardless of the protocol version used. The examination's coverage of SSL/TLS reflects the reality that virtually all enterprise application traffic is encrypted today and that application delivery professionals must be comfortable working with encrypted traffic at a technical level.
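Several of these settings map directly onto Python's standard ssl module. The sketch below builds the kind of server-side context a TLS-terminating proxy would use toward clients; conceptually this resembles what a BIG-IP Client SSL profile configures, but the function name, its parameters, and the chosen cipher string are illustrative assumptions, and certificate and CA file paths are left for the caller to supply.

```python
import ssl
from typing import Optional


def make_offload_context(cert_file: Optional[str] = None,
                         key_file: Optional[str] = None,
                         require_client_cert: bool = False,
                         ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Build a server-side TLS context for terminating client connections."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    # Refuse SSLv3, TLS 1.0, and TLS 1.1, which are considered insecure.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Limit TLS 1.2 cipher suites to ECDHE key exchange with AES-GCM.
    # (TLS 1.3 suites are configured separately and are not affected.)
    ctx.set_ciphers("ECDHE+AESGCM")
    if cert_file and key_file:
        # The server certificate chain the client validates during the handshake.
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if require_client_cert:
        # Mutual TLS: the client must also present a certificate that
        # chains to a trusted CA.
        ctx.verify_mode = ssl.CERT_REQUIRED
        if ca_file:
            ctx.load_verify_locations(cafile=ca_file)
    return ctx
```

With require_client_cert left at False, the context performs one-way authentication, where only the server presents a certificate; setting it to True switches to mutual TLS.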

HTTP Protocol Fundamentals and Application Layer Concepts

HTTP protocol knowledge is essential for the F5 101 examination because application delivery controllers operate extensively at the application layer, inspecting and manipulating HTTP traffic to implement features including content switching, header modification, cookie insertion, compression, caching, and application-layer security controls. Candidates must understand HTTP as a stateless request-response protocol built on TCP connections, the structure of HTTP requests and responses including methods, status codes, headers, and message bodies, and the operational differences between HTTP versions including the persistent connection model introduced in HTTP/1.1 and the multiplexing capabilities of HTTP/2.

HTTP methods including GET, POST, PUT, DELETE, HEAD, and OPTIONS each have specific semantics that affect how application delivery controllers handle them. GET requests are safe and idempotent, so their responses can generally be served from cache, while POST requests carry request bodies, are not idempotent, and their responses typically should not be cached. These distinctions matter for application delivery configuration because features like content caching and request routing decisions often depend on method type. HTTP status codes communicate the outcome of requests and are important for health monitoring configurations that evaluate whether a server is responding correctly. Redirect codes in the 300 range, client error codes in the 400 range, and server error codes in the 500 range each have different implications for traffic management decisions. Cookie handling is another HTTP concept with direct application delivery relevance because cookie-based session persistence relies on the ability to insert, read, and rewrite cookies as they pass through an application delivery controller.
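The request structure, method-based caching decision, status code classes, and cookie handling described above can be sketched with Python's standard library. The helper names, the cookie name "persist", and the member value are hypothetical; BIG-IP's own persistence cookie format is not reproduced here.

```python
from http.cookies import SimpleCookie
from typing import Dict, Optional, Tuple

# Methods whose responses this sketch treats as cacheable; all others are not cached.
CACHEABLE_METHODS = {"GET", "HEAD"}


def parse_request(raw: bytes) -> Tuple[str, str, str, Dict[str, str]]:
    """Split a raw HTTP/1.1 request into method, URI, version, and headers."""
    head = raw.split(b"\r\n\r\n", 1)[0].decode("latin-1")
    request_line, *header_lines = head.split("\r\n")
    method, uri, version = request_line.split(" ", 2)
    headers = {}
    for line in header_lines:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()  # header names are case-insensitive
    return method, uri, version, headers


def is_cacheable(method: str) -> bool:
    return method.upper() in CACHEABLE_METHODS


def status_class(code: int) -> str:
    """Map a status code to its class, e.g. 404 -> 'client error'."""
    classes = {1: "informational", 2: "success", 3: "redirect",
               4: "client error", 5: "server error"}
    return classes.get(code // 100, "invalid")


def persisted_member(cookie_header: str, cookie_name: str) -> Optional[str]:
    """Read a persistence cookie from a request's Cookie header, if present."""
    jar = SimpleCookie()
    jar.load(cookie_header)
    return jar[cookie_name].value if cookie_name in jar else None


def insert_persistence_cookie(cookie_name: str, member: str) -> str:
    """Build the Set-Cookie header a proxy could add to a response."""
    jar = SimpleCookie()
    jar[cookie_name] = member
    jar[cookie_name]["path"] = "/"
    return jar.output()
```

On the first response the proxy inserts the cookie naming the chosen member; on later requests it reads the cookie back and sends the client to the same member instead of running the load balancing algorithm again.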

Network Address Translation and Its Role in Application Delivery

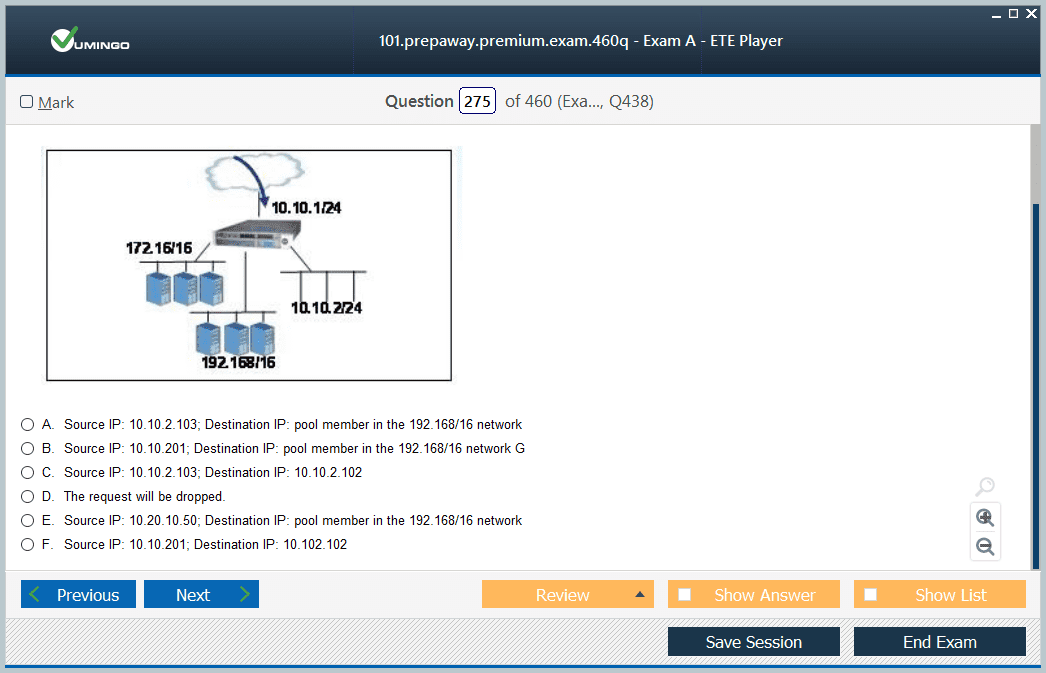

Network address translation, commonly known as NAT, is a networking mechanism with direct relevance to how application delivery controllers handle traffic flows, and the F5 101 examination covers NAT concepts with specific attention to how they apply in application delivery contexts. Source NAT, or SNAT, is a particularly important concept because it addresses a fundamental routing problem that arises in many BIG-IP deployments. When a BIG-IP device receives a client request destined for a virtual server address, it forwards the request to a backend pool member. If the pool member responds directly to the client without routing the response through the BIG-IP device, for example because its default gateway is a router other than the BIG-IP, the client receives a response from an unexpected IP address and rejects it.

SNAT solves this asymmetric routing problem by substituting the BIG-IP's own address for the client's source address when forwarding traffic to pool members, ensuring that response traffic must return through the BIG-IP device to reach the client. The examination covers different SNAT configurations including automap, which uses the BIG-IP's self IP addresses for SNAT translation, and SNAT pools, which allow administrators to define specific translation addresses for different traffic scenarios. Destination NAT concepts are also covered in the context of virtual server functionality, where the BIG-IP translates the destination address of incoming traffic from the virtual server IP address to the selected pool member's real IP address. Understanding how these NAT operations interact with routing tables, connection tables, and traffic flow paths is essential for reasoning about BIG-IP deployment architectures.
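The combined destination NAT and SNAT rewrite, and the connection table that reverses it, can be modeled as a toy Python sketch. The class and method names are hypothetical, the addresses in the usage come from documentation ranges, and allocating a fresh source port per connection is a simplification of how a real SNAT implementation may handle ports.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Flow:
    src: str  # "ip:port"
    dst: str  # "ip:port"


class SnatTranslator:
    """Toy model of destination NAT plus SNAT on a proxy with a connection table."""

    def __init__(self, virtual_server: str, snat_ip: str, first_port: int = 40000) -> None:
        self.virtual_server = virtual_server
        self.snat_ip = snat_ip
        self._next_port = first_port
        # Keyed by the expected server reply (src, dst); value is the original client flow.
        self.table: Dict[Tuple[str, str], Flow] = {}

    def to_server(self, client_flow: Flow, member: str) -> Flow:
        """Rewrite a client request: destination becomes the pool member
        (destination NAT) and source becomes the SNAT address."""
        if client_flow.dst != self.virtual_server:
            raise ValueError("flow is not addressed to this virtual server")
        snat_src = f"{self.snat_ip}:{self._next_port}"
        self._next_port += 1
        self.table[(member, snat_src)] = client_flow
        return Flow(src=snat_src, dst=member)

    def to_client(self, reply: Flow) -> Flow:
        """Rewrite a server reply back to the virtual server and original client."""
        client_flow = self.table.get((reply.src, reply.dst))
        if client_flow is None:
            raise LookupError("reply matches no translated connection")
        return Flow(src=client_flow.dst, dst=client_flow.src)


t = SnatTranslator(virtual_server="203.0.113.80:80", snat_ip="10.1.20.245")
client = Flow(src="198.51.100.10:51515", dst="203.0.113.80:80")
to_member = t.to_server(client, member="10.1.20.11:80")
```

Because the pool member sees the SNAT address as the source, it addresses its reply there, so the reply returns through the proxy and matches the connection table. A reply sent straight to the client's own address would match nothing, which is the asymmetric path described in the text.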

Virtual Servers, Pools, and Nodes as Foundational BIG-IP Concepts

The virtual server, pool, and node object hierarchy is the foundational architectural concept of BIG-IP Local Traffic Manager and represents one of the most important conceptual areas tested in the F5 101 examination. A virtual server is the logical entity that listens for client connections on a specific IP address and port combination and defines how matching traffic should be processed. It serves as the primary traffic management object that ties together the policies, profiles, and pool configurations that determine how the BIG-IP handles each connection. Understanding virtual server types including standard virtual servers, forwarding virtual servers, and performance layer four virtual servers is important because each type is designed for different traffic handling scenarios.

A pool is a logical grouping of backend servers, called pool members, that receive traffic from a virtual server according to the configured load balancing algorithm and health monitor settings. Each pool member combines a node's IP address with a service port. Pools can contain multiple members with different IP addresses and port configurations, and individual pool members can be enabled, disabled, or forced offline to control their participation in traffic distribution without removing them from the pool configuration. Nodes are the IP-level objects representing backend servers, and a single node can participate in multiple pools with different port configurations to support different applications running on the same physical server. The relationship between virtual servers, pools, and nodes defines the traffic flow path through a BIG-IP system, and candidates who develop a clear mental model of this object hierarchy will find that many examination questions about BIG-IP behavior become significantly more approachable.
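The object hierarchy can be written down as a few Python dataclasses. This is a minimal data model for illustration, not BIG-IP's configuration schema: the class names, fields, and addresses are hypothetical, and the sketch simply treats disabled and forced-offline members as ineligible for new load-balanced connections.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class MemberState(Enum):
    ENABLED = "enabled"
    DISABLED = "disabled"
    FORCED_OFFLINE = "forced offline"


@dataclass(frozen=True)
class Node:
    address: str  # IP-level object for one backend server


@dataclass
class PoolMember:
    node: Node
    port: int
    state: MemberState = MemberState.ENABLED

    @property
    def endpoint(self) -> str:
        return f"{self.node.address}:{self.port}"


@dataclass
class Pool:
    name: str
    members: List[PoolMember] = field(default_factory=list)

    def eligible(self) -> List[PoolMember]:
        # In this sketch only enabled members receive new load-balanced connections.
        return [m for m in self.members if m.state is MemberState.ENABLED]


@dataclass
class VirtualServer:
    destination: str  # "ip:port" the virtual server listens on
    pool: Pool


# One physical server (node) serving two applications on different ports.
web01 = Node("10.1.20.11")
web_pool = Pool("web_pool", [PoolMember(web01, 80), PoolMember(Node("10.1.20.12"), 80)])
api_pool = Pool("api_pool", [PoolMember(web01, 8080)])
web_vs = VirtualServer("203.0.113.80:80", web_pool)
```

Changing a member's state removes it from eligible() while it stays in the pool's member list, and web01 appears in both pools as the same node object on two different ports.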

iRules and Traffic Management Policy Concepts in the Examination

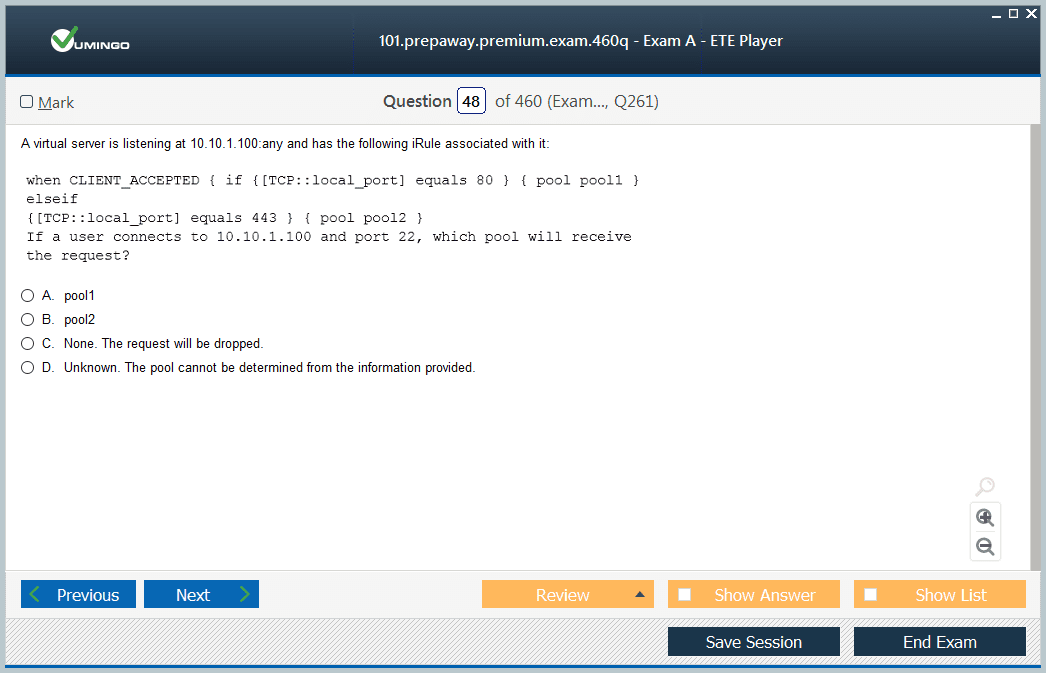

iRules are F5's proprietary scripting language, based on the industry-standard Tcl language, and allow administrators to implement custom traffic management logic that goes beyond what declarative configuration can accomplish. The F5 101 examination introduces iRules concepts at a foundational level appropriate for an entry-level credential, covering the event-driven architecture that determines when iRule code executes, the common events that trigger iRule processing, and basic iRule constructs that implement common traffic management decisions. While the 101 examination does not require the ability to write complex iRules code, candidates should understand what iRules can accomplish and how they relate to other BIG-IP traffic management mechanisms.

iRule events including CLIENT_ACCEPTED, CLIENT_DATA, HTTP_REQUEST, HTTP_RESPONSE, and SERVER_CONNECTED each fire at specific points in the connection lifecycle and provide access to different traffic data and manipulation capabilities. The HTTP_REQUEST event, for example, fires after the BIG-IP receives and parses an HTTP request from a client and provides access to request headers, URI, method, and other request attributes that can be used to make routing decisions or modify the request before forwarding it to a pool member. Local Traffic Policies represent a newer declarative alternative to iRules for common use cases including URI-based routing, header manipulation, and SSL redirect enforcement. Understanding the relationship between iRules and Local Traffic Policies, including when each approach is more appropriate, is a conceptual area that the examination addresses in the context of traffic management flexibility.
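The event-driven model can be illustrated with a Python analogy. This is not iRule syntax or F5's engine: the Rule class, the run_connection helper, and the simplified four-event lifecycle are hypothetical. It only shows how code attached to an event runs when that event fires, and how an HTTP_REQUEST handler can change the pool before the server-side connection is made. The roughly equivalent iRule is quoted in a comment.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# Simplified order in which these events fire for one HTTP connection.
LIFECYCLE = ["CLIENT_ACCEPTED", "HTTP_REQUEST", "SERVER_CONNECTED", "HTTP_RESPONSE"]


class Rule:
    """Holds handlers keyed by event name, loosely like 'when EVENT { ... }' blocks."""

    def __init__(self) -> None:
        self.handlers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def when(self, event: str):
        if event not in LIFECYCLE:
            raise ValueError(f"unknown event {event}")

        def register(fn: Callable[[dict], None]) -> Callable[[dict], None]:
            self.handlers[event].append(fn)
            return fn
        return register


def run_connection(rule: Rule, ctx: dict) -> dict:
    """Fire each lifecycle event in order, running any handlers attached to it."""
    for event in LIFECYCLE:
        ctx.setdefault("events", []).append(event)
        for handler in rule.handlers[event]:
            handler(ctx)
    return ctx


# Hypothetical rule that sends /api/ requests to a different pool. Roughly the iRule:
#   when HTTP_REQUEST { if { [HTTP::uri] starts_with "/api/" } { pool api_pool } }
uri_router = Rule()


@uri_router.when("HTTP_REQUEST")
def route_by_uri(ctx: dict) -> None:
    if ctx["uri"].startswith("/api/"):
        ctx["pool"] = "api_pool"
```

Handlers attached to events that the rule does not use simply never run, which is why an iRule only needs to declare the events it cares about.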

Security Concepts Covered in the Application Delivery Context

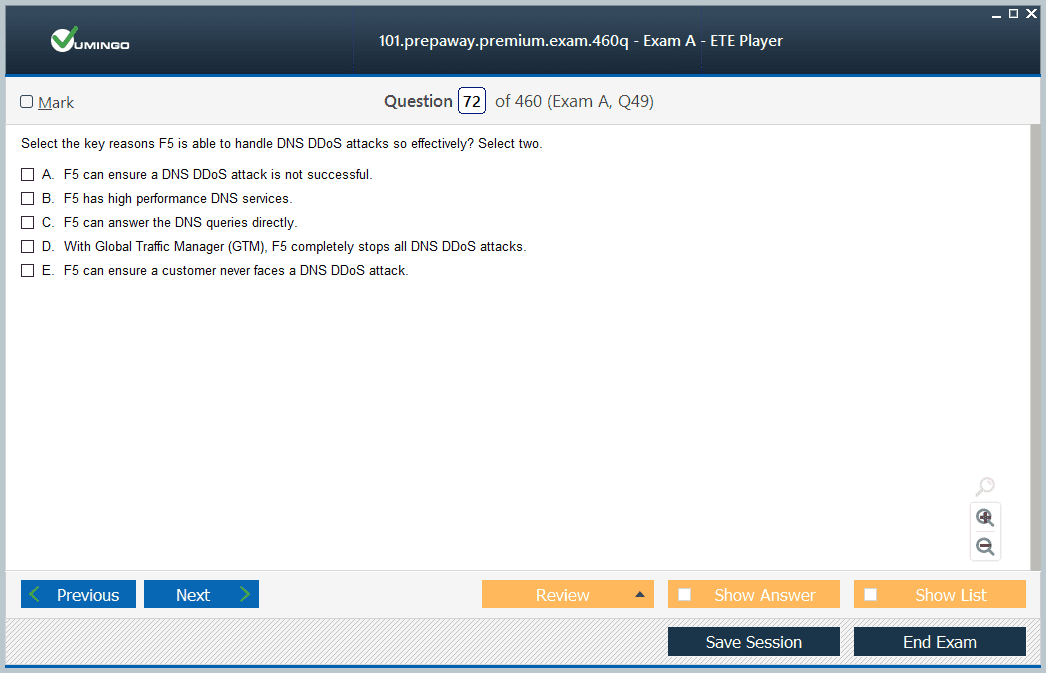

Security is an increasingly central component of application delivery architecture, and the F5 101 examination reflects this reality by covering a meaningful range of security concepts relevant to the application delivery controller role. Distributed denial of service attacks represent one of the most significant threats to application availability, and the examination covers how volumetric DDoS attacks attempt to exhaust bandwidth or connection capacity, how protocol attacks target vulnerabilities in TCP/IP implementations, and how application-layer attacks send seemingly legitimate requests at rates designed to exhaust server processing resources. Understanding these attack categories at a conceptual level is foundational for comprehending how F5 security products address them.

Application layer security concepts including SQL injection, cross-site scripting, and parameter tampering are covered in the examination because these web application attack techniques are addressed by F5's Application Security Manager product and because application delivery professionals need to understand the threats their infrastructure is designed to mitigate. Access control concepts including authentication mechanisms, authorization policies, and single sign-on principles are covered in the context of F5's Access Policy Manager functionality. SSL visibility and its importance for security inspection is another topic that connects security concepts to application delivery architecture, addressing the challenge that encrypted traffic presents for security monitoring and inspection tools that cannot see inside encrypted connections without decryption capability.

Preparing Effectively for the F5 101 Using Available Study Resources

The F5 101 examination preparation landscape includes several categories of resources that serve different learning needs and preparation styles. F5's official study guide, available through the F5 certification portal, provides a comprehensive overview of examination topics aligned directly with the official content domains and serves as the most authoritative single-source reference for what the examination tests. F5 also publishes free online training through its F5 University platform covering many of the foundational topics tested in the 101 examination, and working through the relevant courses provides structured exposure to content presented by F5's own instructional designers.

Practice examination resources from third-party providers offer exposure to the question style and difficulty level of the actual examination and help candidates identify specific knowledge gaps before their official test date. Hands-on practice using F5's BIG-IP Virtual Edition, which is available as a free trial download, provides the practical reinforcement that transforms conceptual knowledge into the kind of applied understanding that scenario-based questions test most effectively. Networking fundamentals books covering TCP/IP protocols, routing concepts, and application layer technologies supplement F5-specific materials by ensuring that the foundational networking knowledge underlying all F5 product functionality is genuinely solid. Candidates who combine official F5 materials with hands-on practice and solid networking fundamentals tend to be the best prepared for the examination's scenario-based questions.

Common Preparation Mistakes and How to Avoid Them Before Exam Day

The most common mistake among F5 101 candidates is treating the examination as a pure product knowledge test and focusing preparation entirely on F5-specific configuration concepts while neglecting the networking and protocol fundamentals that constitute a substantial portion of the examination content. Candidates who arrive at the examination with strong knowledge of BIG-IP object types but weak understanding of TCP connection management, SSL handshake processes, or HTTP protocol behavior consistently find that gaps in these foundational areas cost them points across multiple examination sections simultaneously.

Another frequent preparation mistake is underestimating the scenario-based nature of examination questions. The F5 101 does not simply ask candidates to recall definitions or identify correct terminology. It presents realistic scenarios describing specific application delivery requirements or problems and asks candidates to identify the appropriate solution approach, the correct configuration concept, or the most likely cause of a described symptom. Preparing for this question style requires practicing the application of knowledge to scenarios rather than only reviewing content in isolation. Building a personal study resource that organizes key concepts by practical scenario type rather than by documentation category helps develop the applied reasoning that scenario questions reward. Candidates who combine thorough conceptual preparation with hands-on exploration of BIG-IP functionality in a lab environment arrive at the examination with the most complete preparation profile.

Conclusion

The F5 101 Application Delivery Fundamentals certification represents a genuinely valuable professional credential for networking and infrastructure professionals who work with or aspire to work with application delivery technology in enterprise, service provider, or cloud environments. The knowledge validated by the certification spans a breadth of foundational concepts from TCP/IP protocols and network addressing through load balancing algorithms and health monitoring to SSL/TLS encryption and application layer security that collectively form the intellectual foundation required to work effectively with F5 products and application delivery architecture more broadly. Earning this credential demonstrates that a professional has invested in building a structured and tested understanding of the principles that define modern application delivery.

The examination's requirement for genuine conceptual depth rather than surface-level product familiarity is what gives the F5 101 its lasting professional value. Technology products evolve, specific configuration syntax changes, and new capabilities are added with each product release. But the foundational concepts of TCP connection management, load balancing principles, SSL certificate validation, HTTP protocol behavior, and network address translation remain stable across product generations and provide a durable knowledge base that continues to support professional effectiveness long after the specific product details studied during examination preparation have been superseded by newer releases.

For professionals currently considering whether to pursue the F5 101, the credential serves multiple career purposes simultaneously. It provides a structured learning framework that fills gaps in foundational knowledge that many practitioners have developed through experience without fully systematic grounding. It creates a verified credential that signals genuine application delivery expertise to employers and clients in a market where many professionals claim familiarity with F5 technology without the ability to demonstrate tested knowledge. And it establishes the prerequisite foundation required to pursue higher-level F5 certifications in specialized tracks that command premium professional recognition and compensation in the networking industry.

The preparation journey itself delivers value independent of the examination outcome by systematically building the conceptual framework that supports every subsequent encounter with application delivery technology throughout a professional career. Candidates who invest seriously in understanding the why behind F5 product features rather than simply memorizing what those features are called emerge from the preparation process as genuinely more capable professionals regardless of the specific technologies they work with day to day.

The F5 certification pathway that begins with the 101 examination extends upward through specialist and expert level credentials that represent some of the most respected technical certifications in the networking industry. Professionals who commit to this pathway and build on the foundational knowledge of the 101 with the deeper technical expertise of higher-level certifications position themselves for the most complex and rewarding application delivery roles available in the current technology job market. The F5 101 is where that journey begins, and for professionals serious about application delivery as a career discipline, it is a beginning very much worth making.
